EDUX is a user-friendly library for solving problems with a machine learning approach.

EDUX supports a variety of machine learning algorithms including:

- Multilayer Perceptron (Neural Network): Suitable for regression and classification problems, MLPs can approximate non-linear functions.

- K Nearest Neighbors: A simple, instance-based learning algorithm used for classification and regression.

- Decision Tree: Makes decisions through an explicit sequence of rules on the input features, which is easy to visualize and inspect.

- Support Vector Machine: Effective for binary classification, and can be adapted for multi-class problems.

- RandomForest: An ensemble method providing high accuracy through building multiple decision trees.
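To make the instance-based idea behind K Nearest Neighbors concrete, here is a minimal plain-Java sketch. It is not EDUX's implementation or API: the class `KnnSketch`, its methods, and the Iris-style sample rows are hypothetical and chosen for illustration only. It classifies a query by majority vote among the k closest training points, using Euclidean distance.

```java
import java.util.Arrays;
import java.util.Comparator;
import java.util.HashMap;
import java.util.Map;

// Illustrative only: a plain-Java k-nearest-neighbors classifier, not EDUX's implementation.
class KnnSketch {

    // Euclidean distance between two feature vectors of equal length.
    static double distance(double[] a, double[] b) {
        double sum = 0.0;
        for (int i = 0; i < a.length; i++) {
            double d = a[i] - b[i];
            sum += d * d;
        }
        return Math.sqrt(sum);
    }

    // Predicts the label of `query` by majority vote among its k nearest training points.
    // Ties go to the label that reaches the highest count first (closest neighbors first).
    static String classify(double[][] features, String[] labels, double[] query, int k) {
        Integer[] order = new Integer[features.length];
        for (int i = 0; i < order.length; i++) {
            order[i] = i;
        }
        // Sort training indices by their distance to the query point.
        Arrays.sort(order, Comparator.comparingDouble(idx -> distance(features[idx], query)));

        Map<String, Integer> votes = new HashMap<>();
        String best = null;
        int bestCount = 0;
        for (int i = 0; i < Math.min(k, order.length); i++) {
            String label = labels[order[i]];
            int count = votes.merge(label, 1, Integer::sum);
            if (count > bestCount) {
                bestCount = count;
                best = label;
            }
        }
        return best;
    }

    public static void main(String[] args) {
        // Hypothetical sample rows in the Iris column layout (four features, one label).
        double[][] features = {
            {5.1, 3.5, 1.4, 0.2}, {4.9, 3.0, 1.4, 0.2},
            {6.3, 3.3, 6.0, 2.5}, {5.8, 2.7, 5.1, 1.9}
        };
        String[] labels = {"Setosa", "Setosa", "Virginica", "Virginica"};
        System.out.println(classify(features, labels, new double[]{5.0, 3.4, 1.5, 0.2}, 3));
    }
}
```

Because the model is just the stored training data, "training" costs nothing and all the work happens at prediction time, which is why the sketch sorts the whole training set for every query.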

EDUX supports a variety of image augmentations, which can be used to improve the performance of your model.

Apply an augmentation sequence to a single image:

    AugmentationSequence augmentationSequence =
        new AugmentationBuilder()
            .addAugmentation(new ResizeAugmentation(250, 250))
            .addAugmentation(new ColorEqualizationAugmentation())
            .build();

    BufferedImage augmentedImage = augmentationSequence.applyTo(image);

Run an augmentation sequence over all images in a directory:

    AugmentationSequence augmentationSequence =
        new AugmentationBuilder()
            .addAugmentation(new ResizeAugmentation(250, 250))
            .addAugmentation(new ColorEqualizationAugmentation())
            .addAugmentation(new BlurAugmentation(25))
            .addAugmentation(new RandomDeleteAugmentation(10, 20, 20))
            .build()
            .run(trainImagesDir, numberOfWorkers, outputDir);

We run all algorithms on the same dataset and compare the results. See the Benchmark.
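To give an idea of what a single augmentation step, such as the resize used in the sequences above, does to an image, here is a minimal sketch that uses only the standard `java.awt` classes. It is not EDUX's `ResizeAugmentation`: the class `ResizeSketch`, the method `resize`, and the choice of bilinear interpolation are assumptions made for illustration.

```java
import java.awt.Graphics2D;
import java.awt.RenderingHints;
import java.awt.image.BufferedImage;

// Illustrative only: what a resize step might do internally; not EDUX's ResizeAugmentation.
class ResizeSketch {

    // Returns a new RGB image scaled to the requested size; the source image is left untouched.
    static BufferedImage resize(BufferedImage source, int width, int height) {
        BufferedImage target = new BufferedImage(width, height, BufferedImage.TYPE_INT_RGB);
        Graphics2D g = target.createGraphics();
        try {
            // Bilinear interpolation is an assumed default; other hints trade speed for quality.
            g.setRenderingHint(RenderingHints.KEY_INTERPOLATION,
                    RenderingHints.VALUE_INTERPOLATION_BILINEAR);
            g.drawImage(source, 0, 0, width, height, null);
        } finally {
            g.dispose();
        }
        return target;
    }

    public static void main(String[] args) {
        // A blank 640x480 image stands in for a real training image.
        BufferedImage image = new BufferedImage(640, 480, BufferedImage.TYPE_INT_RGB);
        BufferedImage resized = resize(image, 250, 250);
        System.out.println(resized.getWidth() + "x" + resized.getHeight());
    }
}
```

Returning a new image instead of modifying the input is what makes steps like this easy to chain: each step can take the previous step's output without affecting the original file.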

The main goal of this project is to create a user-friendly library for solving problems using a machine learning approach. The library is designed to be easy to use, so that a problem can be solved with just a few lines of code.

The library currently supports:

- Multilayer Perceptron (Neural Network)

- K Nearest Neighbors

- Decision Tree

- Support Vector Machine

- RandomForest

Include the library as a dependency in your Java project's build file.

Gradle:

    implementation 'io.github.samyssmile:edux:1.0.7'

Maven:

    <dependency>
        <groupId>io.github.samyssmile</groupId>
        <artifactId>edux</artifactId>
        <version>1.0.7</version>
    </dependency>

EDUX supports Nvidia GPU acceleration, which requires:

- Nvidia GPU with CUDA support

- CUDA Toolkit 11.8

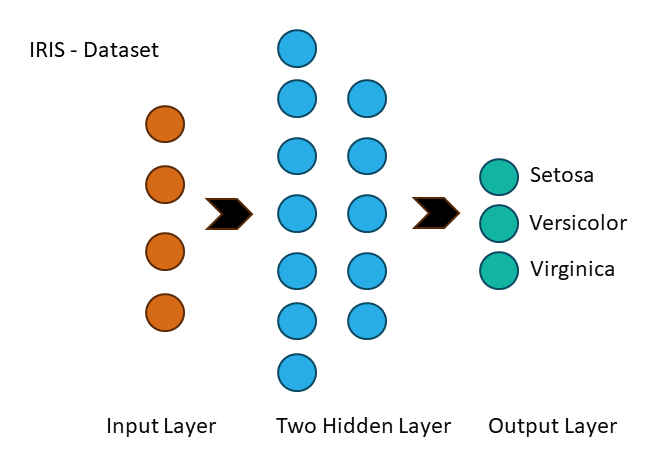

This section guides you through using EDUX to process your dataset, configure a multilayer perceptron (multilayer neural network), and perform training and evaluation.

A multilayer perceptron (MLP) is a feedforward artificial neural network that maps a set of input features to a set of outputs. It consists of several layers of nodes connected as a directed graph that runs from the input layer, through one or more hidden layers, to the output layer.
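The layered structure described above can be sketched as a single forward pass in plain Java. This is not EDUX's implementation: the class `MlpForwardSketch`, the tiny layer sizes, the hand-picked weights, and the leaky ReLU slope of 0.01 are all assumptions for illustration. Each layer computes a weighted sum plus bias, the hidden layer applies leaky ReLU, and the output layer applies softmax to produce class probabilities.

```java
import java.util.Arrays;

// Illustrative only: one forward pass through a tiny MLP; not EDUX's implementation.
class MlpForwardSketch {

    // Fully connected layer: out[j] = bias[j] + sum_i weights[j][i] * input[i].
    static double[] dense(double[][] weights, double[] bias, double[] input) {
        double[] out = new double[weights.length];
        for (int j = 0; j < weights.length; j++) {
            double sum = bias[j];
            for (int i = 0; i < input.length; i++) {
                sum += weights[j][i] * input[i];
            }
            out[j] = sum;
        }
        return out;
    }

    // Leaky ReLU: keeps positive values and scales negative ones by an assumed slope of 0.01.
    static double[] leakyRelu(double[] x) {
        double[] out = new double[x.length];
        for (int i = 0; i < x.length; i++) {
            out[i] = x[i] > 0 ? x[i] : 0.01 * x[i];
        }
        return out;
    }

    // Softmax: turns raw scores into probabilities that sum to 1.
    // Subtracting the maximum first avoids overflow in Math.exp.
    static double[] softmax(double[] x) {
        double max = Double.NEGATIVE_INFINITY;
        for (double v : x) {
            max = Math.max(max, v);
        }
        double sum = 0.0;
        double[] out = new double[x.length];
        for (int i = 0; i < x.length; i++) {
            out[i] = Math.exp(x[i] - max);
            sum += out[i];
        }
        for (int i = 0; i < x.length; i++) {
            out[i] /= sum;
        }
        return out;
    }

    public static void main(String[] args) {
        // 4 inputs -> 2 hidden neurons -> 3 outputs; weights are arbitrary example values.
        double[] input = {5.1, 3.5, 1.4, 0.2};
        double[][] hiddenWeights = {{0.1, -0.2, 0.3, 0.4}, {-0.3, 0.2, 0.1, -0.1}};
        double[] hiddenBias = {0.0, 0.1};
        double[][] outputWeights = {{0.5, -0.4}, {-0.2, 0.3}, {0.1, 0.1}};
        double[] outputBias = {0.0, 0.0, 0.0};

        double[] hidden = leakyRelu(dense(hiddenWeights, hiddenBias, input));
        double[] probabilities = softmax(dense(outputWeights, outputBias, hidden));
        System.out.println(Arrays.toString(probabilities));
    }
}
```

Training adjusts the weights and biases so that these probabilities match the known labels; the sketch covers only the prediction direction.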

First, we load and prepare the Iris dataset, which has the following columns:

| sepal.length | sepal.width | petal.length | petal.width | variety |
|---|---|---|---|---|
| 5.1 | 3.5 | 1.4 | 0.2 | Setosa |

    var featureColumnIndices = new int[]{0, 1, 2, 3}; // Specify your feature columns
    var targetColumnIndex = 4; // Specify your target column

    var dataProcessor = new DataProcessor(new CSVIDataReader());
    var dataset = dataProcessor.loadDataSetFromCSV(
        "path/to/your/data.csv", // Replace with your CSV file path
        ',', // CSV delimiter
        true, // Whether to skip the header
        featureColumnIndices,
        targetColumnIndex
    );
    dataset.shuffle();
    dataset.normalize();
    dataProcessor.split(0.8); // Replace with your train-test split ratio

Extract the features and labels for both training and test sets:

    var trainFeatures = dataProcessor.getTrainFeatures(featureColumnIndices);
    var trainLabels = dataProcessor.getTrainLabels(targetColumnIndex);
    var testFeatures = dataProcessor.getTestFeatures(featureColumnIndices);
    var testLabels = dataProcessor.getTestLabels(targetColumnIndex);

Configure the network:

    var networkConfiguration = new NetworkConfiguration(
        trainFeatures[0].length, // Number of input neurons
        List.of(128, 256, 512), // Number of neurons in each hidden layer
        3, // Number of output neurons
        0.01, // Learning rate
        300, // Number of epochs
        ActivationFunction.LEAKY_RELU, // Activation function for hidden layers
        ActivationFunction.SOFTMAX, // Activation function for output layer
        LossFunction.CATEGORICAL_CROSS_ENTROPY, // Loss function
        Initialization.XAVIER, // Weight initialization for hidden layers
        Initialization.XAVIER // Weight initialization for output layer
    );

Create the model, then train and evaluate it:

    MultilayerPerceptron multilayerPerceptron = new MultilayerPerceptron(
        networkConfiguration,
        testFeatures,
        testLabels
    );
    multilayerPerceptron.train(trainFeatures, trainLabels);
    multilayerPerceptron.evaluate(testFeatures, testLabels);

Example output:

    ...
    MultilayerPerceptron - Best accuracy after restoring best MLP model: 98,56%
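The accuracy reported above is the share of test samples that were classified correctly. The following sketch shows one way such a figure can be computed. It is not EDUX's `evaluate` method: the class `AccuracySketch` is hypothetical, and it assumes that predictions are per-class probabilities and that labels are one-hot encoded.

```java
// Illustrative only: computing classification accuracy; not EDUX's evaluate() implementation.
class AccuracySketch {

    // Index of the largest value, i.e. the predicted class or the position of the 1 in a one-hot label.
    static int argMax(double[] values) {
        int best = 0;
        for (int i = 1; i < values.length; i++) {
            if (values[i] > values[best]) {
                best = i;
            }
        }
        return best;
    }

    // Fraction of samples whose predicted class matches the (assumed one-hot) label.
    static double accuracy(double[][] predictions, double[][] oneHotLabels) {
        int correct = 0;
        for (int i = 0; i < predictions.length; i++) {
            if (argMax(predictions[i]) == argMax(oneHotLabels[i])) {
                correct++;
            }
        }
        return (double) correct / predictions.length;
    }

    public static void main(String[] args) {
        // Hypothetical softmax outputs for four test samples and their true classes.
        double[][] predictions = {
            {0.8, 0.1, 0.1}, {0.2, 0.5, 0.3}, {0.3, 0.4, 0.3}, {0.1, 0.2, 0.7}
        };
        double[][] labels = {
            {1, 0, 0}, {0, 1, 0}, {0, 0, 1}, {0, 0, 1}
        };
        System.out.printf("Accuracy: %.2f%%%n", 100.0 * accuracy(predictions, labels));
    }
}
```

In this example three of the four predictions match their labels, so the method returns 0.75.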

You can find more fully working examples for all algorithms in the examples folder.

For examples we use the

Contributions are warmly welcomed! If you find a bug, please create an issue with a detailed description of the problem. If you wish to suggest an improvement or fix a bug, please open a pull request. Also check out the Rules and Guidelines page for more information.