This YOLOv5-Paddle 🚀 notebook by GuoQuanhao presents simple training, validation, prediction and export examples. YOLOv5-Paddle now supports conversion of single-precision and half-precision models to multiple formats. Contact me on GitHub for professional support.

☑ PaddleLite ☑ PaddleInference ☑ ONNX ☑ OpenVINO ☑ TensorRT

Install

Clone repo and install requirements.txt in a Python>=3.7.0 environment, including PaddlePaddle>=2.4.0.

git clone https://github.com/GuoQuanhao/yolov5-Paddle # clone

cd yolov5-Paddle

pip install -r requirements.txt # install

Training

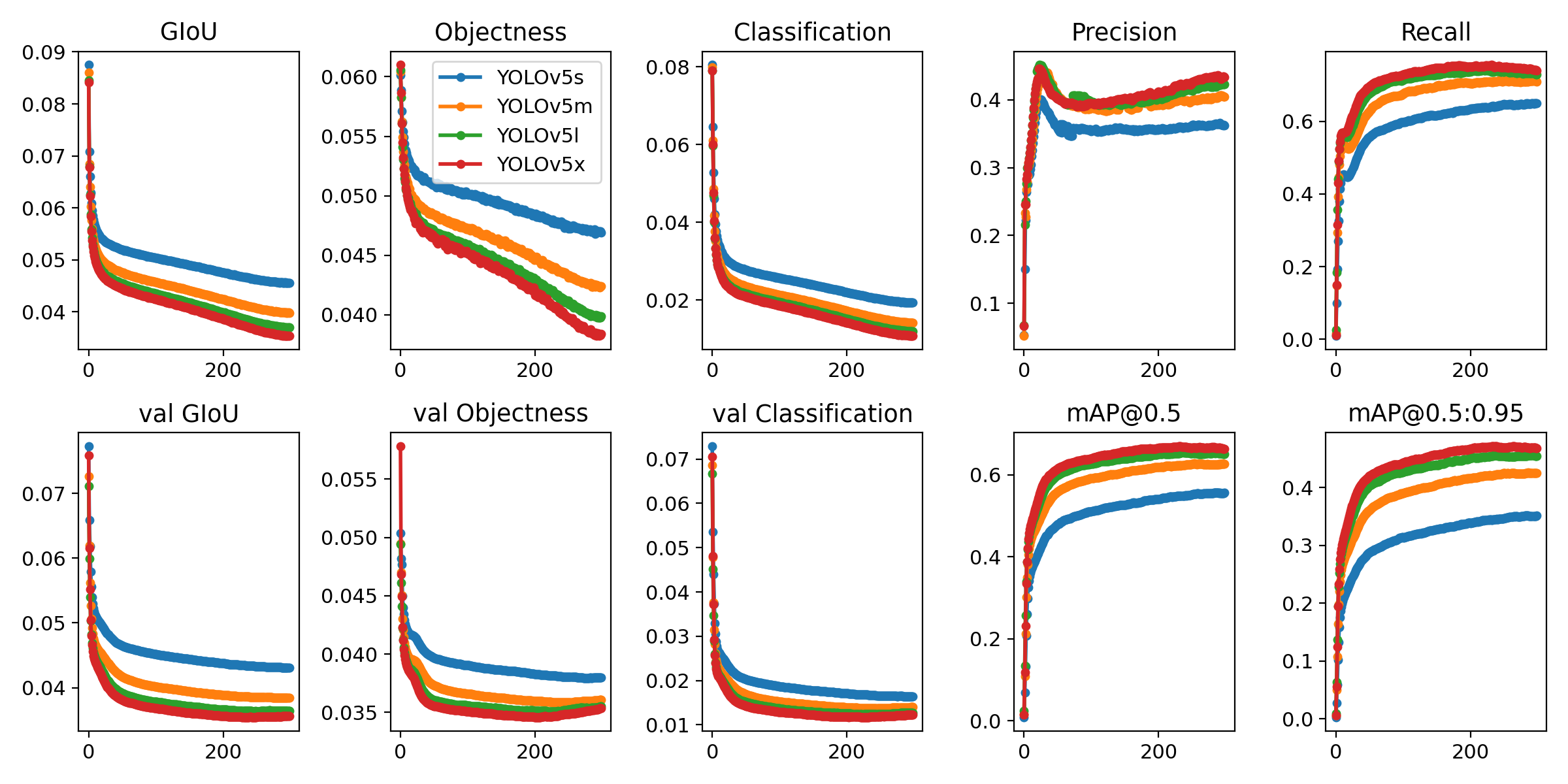

The commands below reproduce YOLOv5 COCO results. Models and datasets download automatically from the latest YOLOv5 release. Batch sizes shown for V100-16GB

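# Single-GPU or CPU (from scratch)

python train.py --data coco.yaml --epochs 300 --weights '' --cfg yolov5n.yaml --batch-size 128 --device ''

For the other model sizes, substitute the model name and batch size (the --device values are examples):

| model | --batch-size | --device |
|---|---|---|
| yolov5s | 64 | cpu |
| yolov5m | 40 | 0 |
| yolov5l | 24 | 1 |
| yolov5x | 16 | 2 |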

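# Single-GPU or CPU (pretrained)

python train.py --data coco.yaml --epochs 300 --weights yolov5n.pdparams --batch-size 128 --device ''

For the other model sizes, substitute the model name and batch size (the --device values are examples):

| model | --batch-size | --device |
|---|---|---|
| yolov5s | 64 | cpu |
| yolov5m | 40 | 0 |
| yolov5l | 24 | 1 |
| yolov5x | 16 | 2 |

# Multi-GPU, from scratch and pretrained as above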

python -m paddle.distributed.launch --gpus 0,1,2,3 train.py --weights '' --cfg yolov5n.yaml --batch-size 128 --data coco.yaml --epochs 300 --device 0,1,2,3

| model | --batch-size |
|---|---|
| yolov5s | 64 |
| yolov5m | 40 |
| yolov5l | 24 |
| yolov5x | 16 |
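The training commands above differ only in model name, batch size and device. A minimal sketch that assembles them from the values quoted in this section is shown below. The helper name `build_train_command` and the `BATCH_SIZES` dictionary are hypothetical conveniences, not part of the repository, and the `<model>.yaml` / `<model>.pdparams` naming is inferred from the yolov5n examples.

```python
import shlex

# Batch sizes quoted in this README for a V100-16GB.
BATCH_SIZES = {
    "yolov5n": 128,
    "yolov5s": 64,
    "yolov5m": 40,
    "yolov5l": 24,
    "yolov5x": 16,
}


def build_train_command(model, pretrained=False, device=""):
    """Return the argument list for one training run (hypothetical helper).

    device is a string such as "", "cpu", "0" or "0,1,2,3"; a comma means
    multi-GPU, which is launched through paddle.distributed.launch.
    """
    if model not in BATCH_SIZES:
        raise ValueError(f"unknown model: {model}")
    cmd = ["python"]
    if "," in device:
        cmd += ["-m", "paddle.distributed.launch", "--gpus", device]
    cmd += ["train.py", "--data", "coco.yaml", "--epochs", "300"]
    if pretrained:
        # Assumed naming: pretrained weights follow the <model>.pdparams pattern.
        cmd += ["--weights", f"{model}.pdparams"]
    else:
        # From scratch: empty weights plus the model config file.
        cmd += ["--weights", "", "--cfg", f"{model}.yaml"]
    cmd += ["--batch-size", str(BATCH_SIZES[model]), "--device", device]
    return cmd


if __name__ == "__main__":
    for name in BATCH_SIZES:
        # shlex.quote renders the empty string as '' so the line can be pasted into a shell.
        print(" ".join(shlex.quote(arg) for arg in build_train_command(name)))
```

Printing the list instead of executing it keeps the sketch safe to run on a machine without PaddlePaddle installed; pass the list to `subprocess.run` to launch a run.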

Evaluation

The commands below reproduce YOLOv5 COCO results. Models and datasets download automatically from the latest YOLOv5 release. Batch sizes shown for V100-16GB

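# Single-GPU or CPU

python val.py --data coco.yaml --weights yolov5n.pdparams --img 640 --conf 0.001 --iou 0.65 --device ''

For the other model sizes, substitute the model name (the --device values are examples):

| model | --device |
|---|---|
| yolov5s | cpu |
| yolov5m | 0 |
| yolov5l | 1 |
| yolov5x | 2 |

Inference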

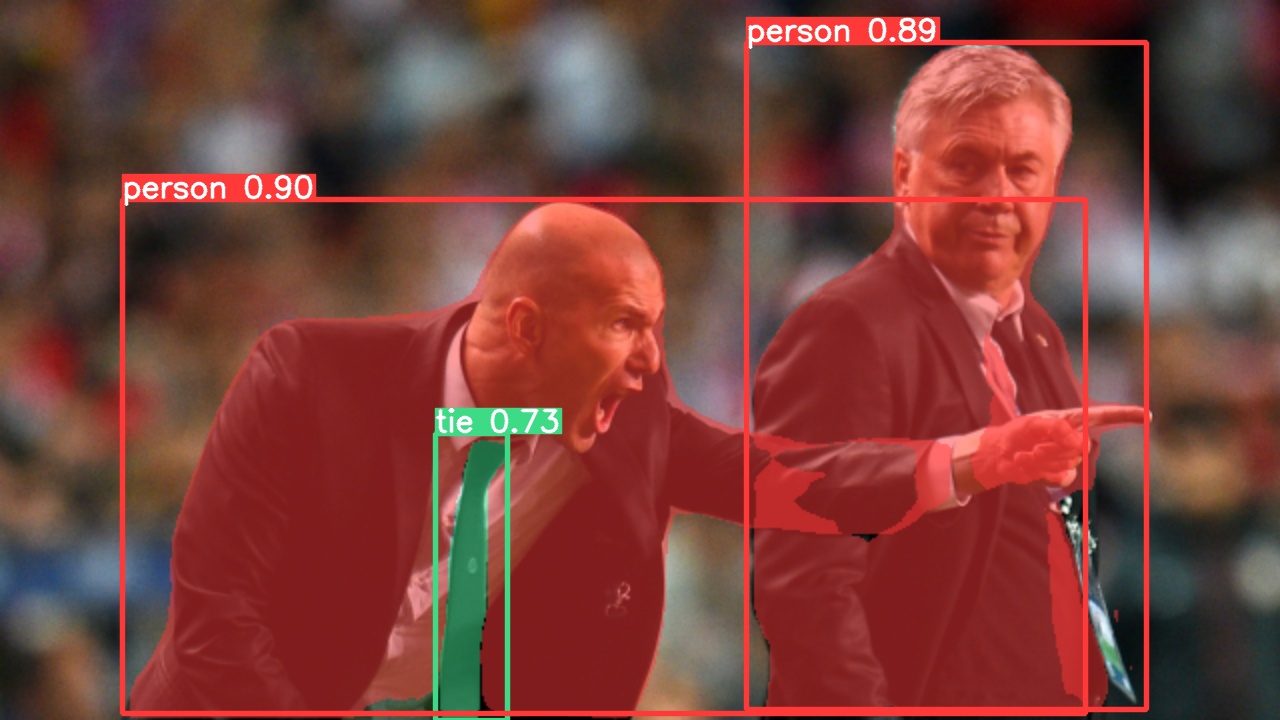

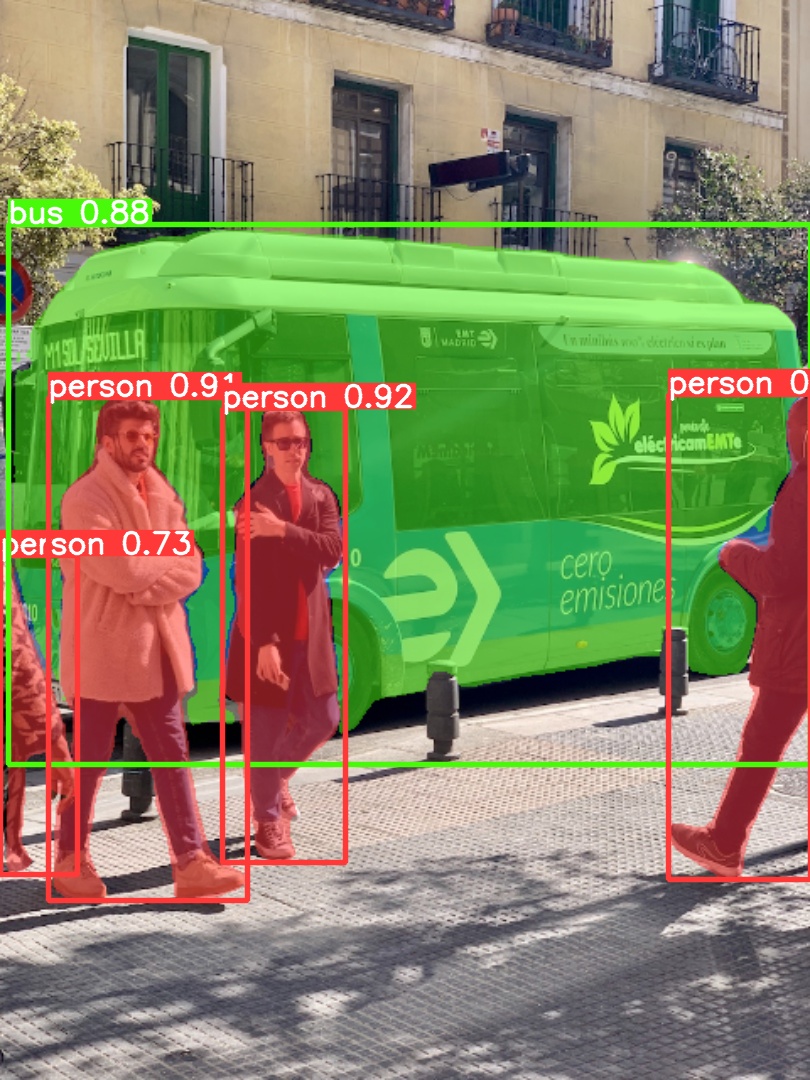

YOLOv5 PaddlePaddle inference. Models download automatically from the latest

# Model

python hubconf.py # or yolov5n - yolov5x6, custom

detect.py runs inference on a variety of sources, downloading models automatically from Baidu Drive and saving results to runs/detect.

python detect.py --weights yolov5s.pdparams --source 0 # webcam

img.jpg # image

vid.mp4 # video

screen # screenshot

path/ # directory

list.txt # list of images

list.streams # list of streams

'path/*.jpg' # glob

'https://youtu.be/Zgi9g1ksQHc' # YouTube

'rtsp://example.com/media.mp4' # RTSP, RTMP, HTTP stream

Benchmark

python benchmarks.py --weights ./yolov5s.pdparams --device 0

Benchmarks complete (187.81s)

| | Format | Size (MB) | mAP50-95 | Inference time (ms) |
|---|---|---|---|---|
| 0 | PaddlePaddle | 13.9 | 0.4716 | 9.75 |
| 1 | PaddleInference | 27.8 | 0.4716 | 20.82 |
| 2 | ONNX | 27.6 | 0.4717 | 32.23 |
| 3 | TensorRT | 32.2 | 0.4717 | 3.05 |
| 4 | OpenVINO | 27.9 | 0.4717 | 43.67 |
| 5 | PaddleLite | 27.8 | 0.4717 | 264.86 |
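To compare formats at a glance, the inference times above can be expressed relative to the native PaddlePaddle run. The sketch below only rearranges the numbers printed by this one benchmark; the function name `relative_speed` is hypothetical, and the ratios say nothing about other hardware or models.

```python
# (format, inference time in ms) copied from the benchmark output above.
RESULTS = [
    ("PaddlePaddle", 9.75),
    ("PaddleInference", 20.82),
    ("ONNX", 32.23),
    ("TensorRT", 3.05),
    ("OpenVINO", 43.67),
    ("PaddleLite", 264.86),
]


def relative_speed(results, baseline="PaddlePaddle"):
    """Return {format: baseline_time / format_time}; values above 1 are faster."""
    times = dict(results)
    base = times[baseline]
    return {name: base / ms for name, ms in times.items()}


if __name__ == "__main__":
    ratios = relative_speed(RESULTS)
    # List the fastest format first.
    for name, ratio in sorted(ratios.items(), key=lambda kv: kv[1], reverse=True):
        print(f"{name:16s} {ratio:6.2f}x")
```

In this run TensorRT is roughly 3.2 times faster than the PaddlePaddle baseline, while every other exported format is slower than it.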

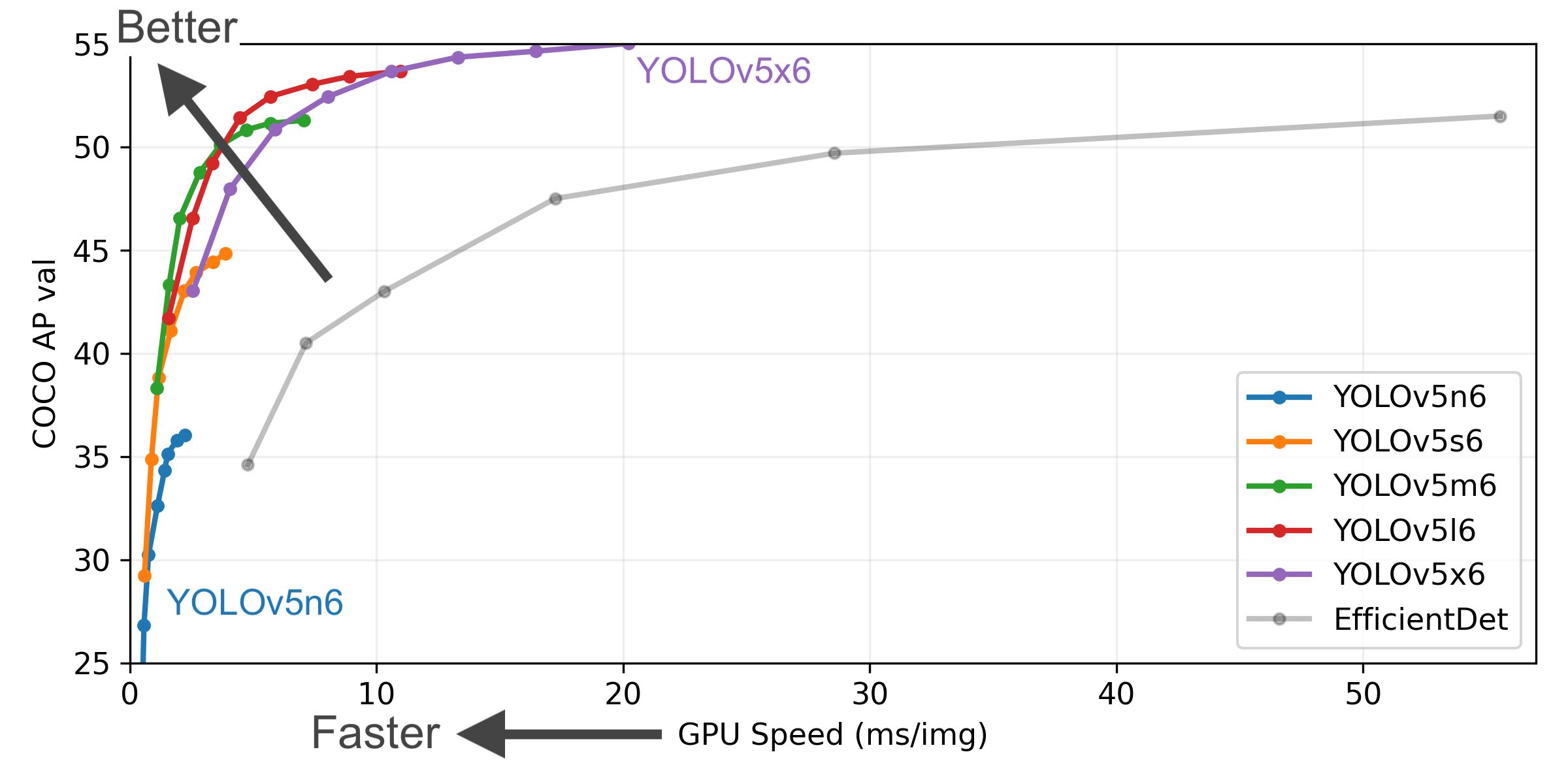

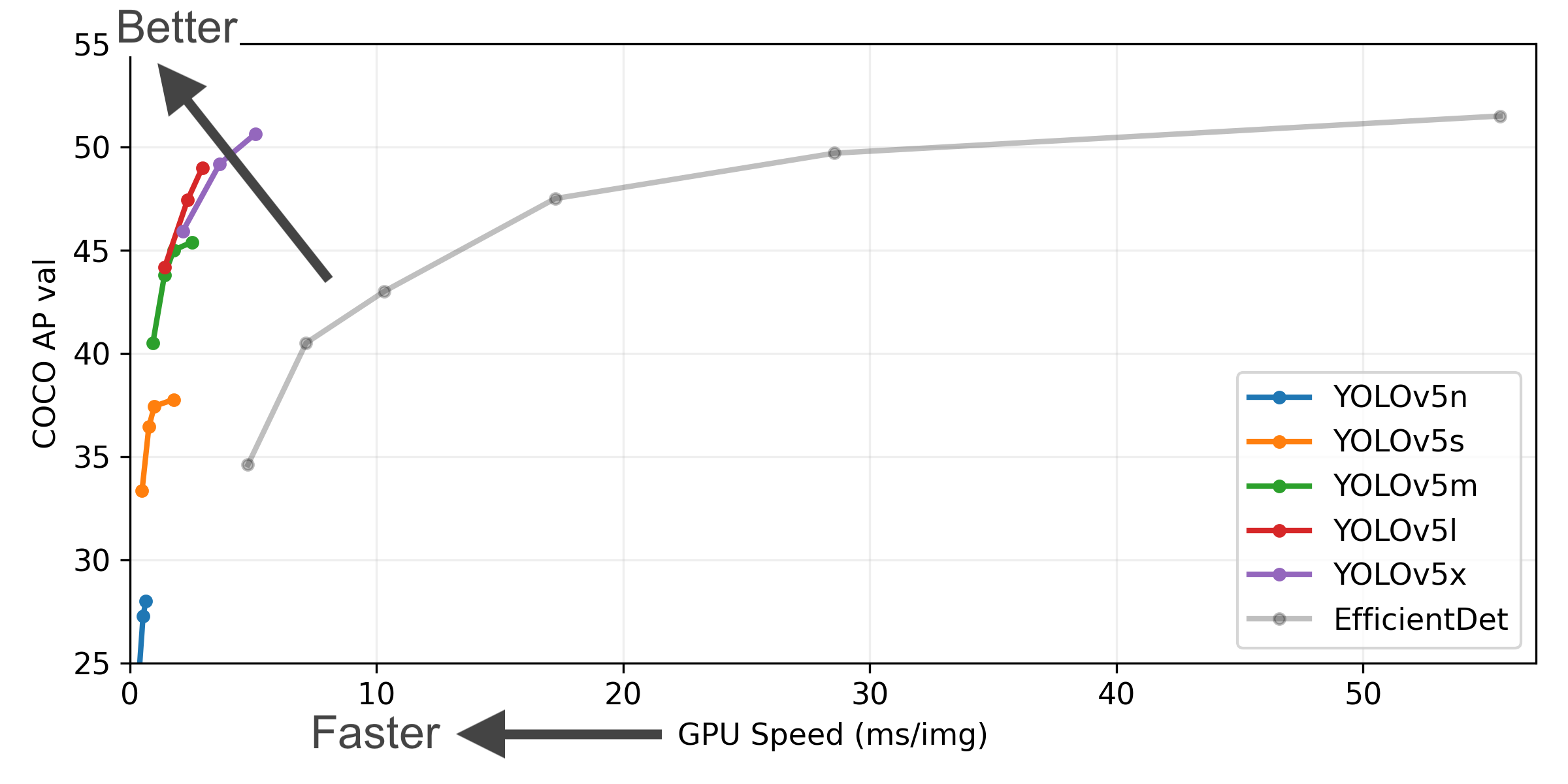

Figure Notes

- COCO AP val denotes the mAP@0.5:0.95 metric measured on the 5000-image COCO val2017 dataset over various inference sizes from 256 to 1536.

- GPU Speed measures average inference time per image on the COCO val2017 dataset using an AWS p3.2xlarge V100 instance at batch-size 32.

- Reproduce by

python val.py --task study --data coco.yaml --iou 0.7 --weights yolov5n6.pdparams yolov5s6.pdparams yolov5m6.pdparams yolov5l6.pdparams yolov5x6.pdparams

Detection Checkpoints

Accuracy, params and FLOPs were verified with PaddlePaddle; speed figures are taken from the original YOLOv5.

| Model | size (pixels) | mAPval 50-95 | mAPval 50 | Speed CPU b1 (ms) | Speed V100 b1 (ms) | Speed V100 b32 (ms) | params (M) | FLOPs @640 (B) |
|---|---|---|---|---|---|---|---|---|
| YOLOv5n | 640 | 28.0 | 45.7 | 45 | 6.3 | 0.6 | 1.9 | 4.5 |
| YOLOv5s | 640 | 37.4 | 56.8 | 98 | 6.4 | 0.9 | 7.2 | 16.5 |
| YOLOv5m | 640 | 45.3 | 64.1 | 224 | 8.2 | 1.7 | 21.2 | 49.0 |
| YOLOv5l | 640 | 49.0 | 67.4 | 430 | 10.1 | 2.7 | 46.5 | 109.1 |
| YOLOv5x | 640 | 50.6 | 68.8 | 766 | 12.1 | 4.8 | 86.7 | 205.7 |
| YOLOv5n6 | 1280 | 36.0 | 54.4 | 153 | 8.1 | 2.1 | 3.2 | 4.6 |
| YOLOv5s6 | 1280 | 44.8 | 63.7 | 385 | 8.2 | 3.6 | 12.6 | 16.8 |
| YOLOv5m6 | 1280 | 51.3 | 69.3 | 887 | 11.1 | 6.8 | 35.7 | 50.0 |
| YOLOv5l6 | 1280 | 53.7 | 71.3 | 1784 | 15.8 | 10.5 | 76.8 | 111.4 |
| YOLOv5x6 | 1280 | 55.0 | 72.7 | 3136 | 26.2 | 19.4 | 140.7 | 209.8 |
| YOLOv5x6 + TTA | 1536 | 55.8 | 72.7 | - | - | - | - | - |

Table Notes

- All checkpoints are trained to 300 epochs with default settings. Nano and Small models use hyp.scratch-low.yaml hyps, all others use hyp.scratch-high.yaml.

- mAPval values are for single-model single-scale on the COCO val2017 dataset. Reproduce by: python val.py --data coco.yaml --img 640 --conf 0.001 --iou 0.65

- Speed averaged over COCO val images using an AWS p3.2xlarge instance. NMS times (~1 ms/img) not included. Reproduce by: python val.py --data coco.yaml --img 640 --task speed --batch 1

- TTA (Test Time Augmentation) includes reflection and scale augmentations. Reproduce by: python val.py --data coco.yaml --img 1536 --iou 0.7 --augment
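The speed columns in the detection checkpoint table are per-image latencies in milliseconds, and the notes say NMS (about 1 ms per image) is excluded. A small sketch of converting those figures to throughput follows; the function name `images_per_second` and the optional `nms_ms` parameter are illustrative assumptions, and the results are plain arithmetic on the table values rather than new measurements.

```python
# "Speed V100 b32 (ms)" column from the detection checkpoint table.
V100_B32_MS = {
    "YOLOv5n": 0.6,
    "YOLOv5s": 0.9,
    "YOLOv5m": 1.7,
    "YOLOv5l": 2.7,
    "YOLOv5x": 4.8,
}


def images_per_second(ms_per_image, nms_ms=0.0):
    """Convert a per-image latency (plus optional NMS time) to images/second."""
    total = ms_per_image + nms_ms
    if total <= 0:
        raise ValueError("latency must be positive")
    return 1000.0 / total


if __name__ == "__main__":
    for name, ms in V100_B32_MS.items():
        raw = images_per_second(ms)
        with_nms = images_per_second(ms, nms_ms=1.0)  # ~1 ms/img per the table notes
        print(f"{name}: {raw:7.1f} img/s inference only, {with_nms:6.1f} img/s with NMS")
```

For the smaller models the NMS time is comparable to or larger than the model time, so the inference-only figure overstates end-to-end throughput.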

Export

python export.py --weights yolov5n.pdparams --include paddleinfer onnx engine openvino paddlelite

yolov5s.pdparams

yolov5m.pdparams

yolov5l.pdparams

yolov5x.pdparams

You can use --dynamic or --half to export a model with dynamic dimensions or in half precision.
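As background on what half precision means for the stored numbers, the sketch below uses only Python's standard `struct` module to compare 32-bit and 16-bit floats. It illustrates the number format in general and makes no claim about how export.py performs the conversion; the helper name `roundtrip` is hypothetical.

```python
import struct

# Storage size of one value in each format ("f" = IEEE 754 single, "e" = half).
FP32_BYTES = struct.calcsize("<f")
FP16_BYTES = struct.calcsize("<e")


def roundtrip(value, fmt):
    """Pack a Python float into the given struct format and read it back."""
    return struct.unpack(fmt, struct.pack(fmt, value))[0]


if __name__ == "__main__":
    weight = 0.1  # an arbitrary example value, not taken from any model
    print("bytes per value:", FP32_BYTES, "vs", FP16_BYTES)
    print("fp32 error:", abs(roundtrip(weight, "<f") - weight))
    print("fp16 error:", abs(roundtrip(weight, "<e") - weight))
```

Each value takes half the space in FP16 at the cost of a larger rounding error, which is why accuracy is worth re-checking after exporting with --half.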

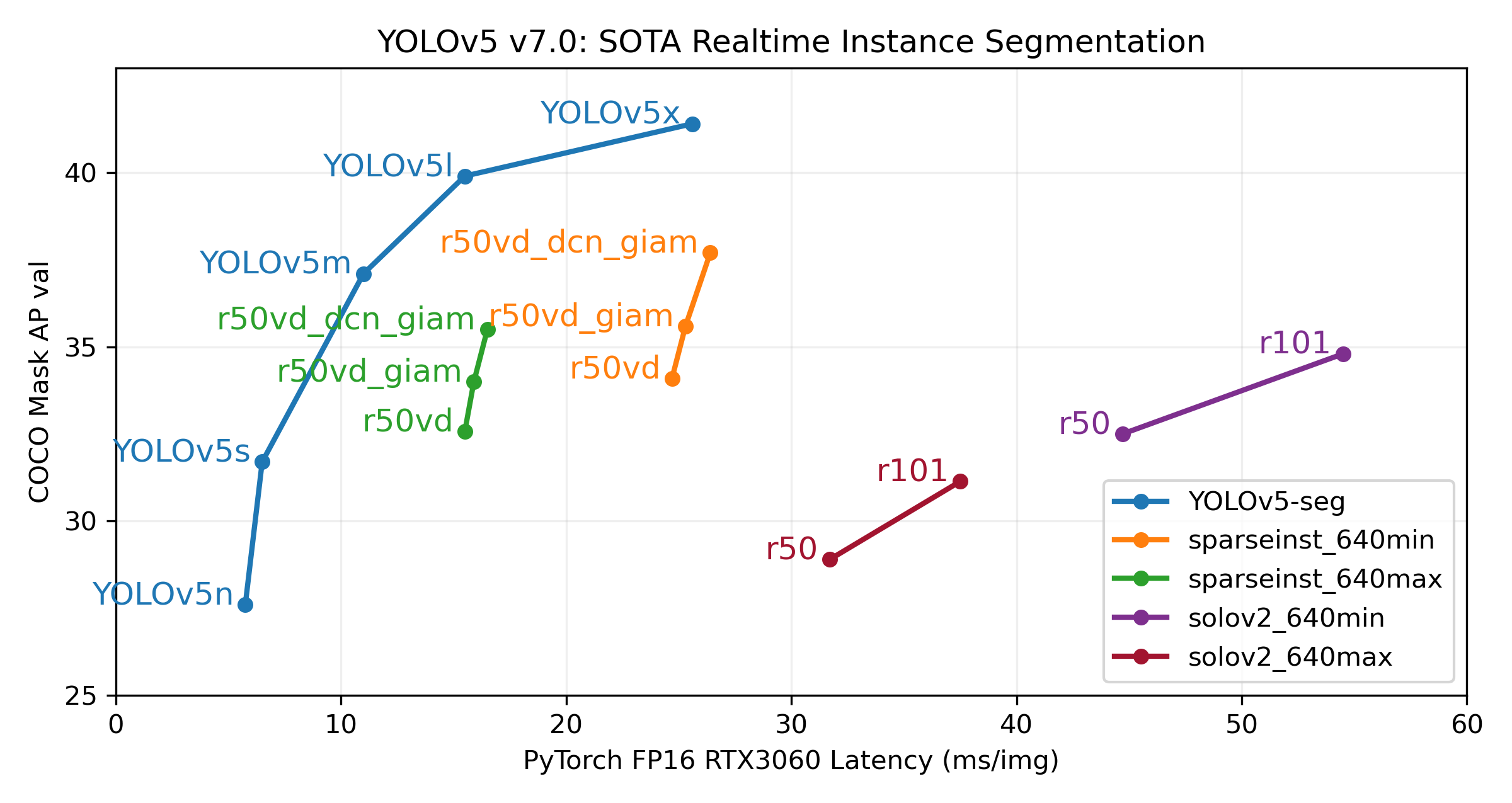

Segmentation Checkpoints

| Model | size (pixels) | mAPbox 50-95 | mAPmask 50-95 | Train time 300 epochs A100 (hours) | Speed ONNX CPU (ms) | Speed TRT A100 (ms) | params (M) | FLOPs @640 (B) |
|---|---|---|---|---|---|---|---|---|
| YOLOv5n-seg | 640 | 27.2 | 23.5 | 80:17 | 62.7 | 1.2 | 2.0 | 7.1 |
| YOLOv5s-seg | 640 | 37.3 | 31.8 | 88:16 | 173.3 | 1.4 | 7.6 | 26.4 |
| YOLOv5m-seg | 640 | 44.7 | 37.5 | 108:36 | 427.0 | 2.2 | 22.0 | 70.8 |
| YOLOv5l-seg | 640 | 48.7 | 40.3 | 66:43 (2x) | 857.4 | 2.9 | 47.9 | 147.7 |
| YOLOv5x-seg | 640 | 50.7 | 41.4 | 62:56 (3x) | 1579.2 | 4.5 | 88.8 | 265.7 |

- All checkpoints are trained to 300 epochs with SGD optimizer with lr0=0.01 and weight_decay=5e-5 at image size 640 and all default settings.

- Accuracy values are for single-model single-scale on COCO dataset. Reproduce by: python segment/val.py --data coco.yaml --weights yolov5s-seg.pdparams

- Speed averaged over 100 inference images using a Colab Pro A100 High-RAM instance. Values indicate inference speed only (NMS adds about 1 ms per image). Reproduce by: python segment/val.py --data coco.yaml --weights yolov5s-seg.pdparams --batch 1

- Export to ONNX at FP32 and TensorRT at FP16 done with export.py. Reproduce by: python export.py --weights yolov5s-seg.pdparams --include engine --device 0 --half

Segmentation Usage Examples

YOLOv5 segmentation training supports auto-download of the COCO128-seg segmentation dataset with the --data coco128-seg.yaml argument, and manual download of the COCO-segments dataset with bash data/scripts/get_coco.sh --train --val --segments followed by python train.py --data coco.yaml.

# Single-GPU

python segment/train.py --data coco128-seg.yaml --weights yolov5s-seg.pdparams --img 640

# Multi-GPU DDP

python -m paddle.distributed.launch --gpus 0,1,2,3 segment/train.py --weights yolov5s-seg.pdparams --data coco128-seg.yaml --device 0,1,2,3

Validate YOLOv5s-seg mask mAP on COCO dataset:

bash data/scripts/get_coco.sh --val --segments # download COCO val segments split (780MB, 5000 images)

python segment/val.py --weights yolov5s-seg.pdparams --data coco.yaml --img 640 # validate

Use pretrained YOLOv5m-seg.pdparams to predict bus.jpg:

python segment/predict.py --weights yolov5m-seg.pdparams --data data/images/bus.jpg

Export YOLOv5s-seg model to ONNX, TensorRT, etc.

# export model

python export.py --weights yolov5s-seg.pdparams --include paddleinfer onnx engine openvino paddlelite --img 640 --device 0

# Inference

python detect.py --weights yolov5s.pdparams # PaddlePaddle

yolov5s.onnx # ONNX Runtime or OpenCV DNN with --dnn

yolov5s_openvino_model # OpenVINO

yolov5s.engine # TensorRT

yolov5s_paddle_model # PaddleInference

yolov5s.nb # PaddleLite

YOLOv5 brings support for classification model training, validation and deployment!

Classification Checkpoints

We trained YOLOv5-cls classification models on ImageNet for 90 epochs using a 4xA100 instance, and we trained ResNet and EfficientNet models alongside with the same default training settings to compare. We exported all models to ONNX FP32 for CPU speed tests and to TensorRT FP16 for GPU speed tests. We ran all speed tests on Google Colab Pro for easy reproducibility.

| Model | size (pixels) | acc top1 | acc top5 | Training 90 epochs 4xA100 (hours) | Speed ONNX CPU (ms) | Speed TensorRT V100 (ms) | params (M) | FLOPs @224 (B) |
|---|---|---|---|---|---|---|---|---|
| YOLOv5n-cls | 224 | 64.6 | 85.4 | 7:59 | 3.3 | 0.5 | 2.5 | 0.5 |
| YOLOv5s-cls | 224 | 71.5 | 90.2 | 8:09 | 6.6 | 0.6 | 5.4 | 1.4 |
| YOLOv5m-cls | 224 | 75.9 | 92.9 | 10:06 | 15.5 | 0.9 | 12.9 | 3.9 |
| YOLOv5l-cls | 224 | 78.0 | 94.0 | 11:56 | 26.9 | 1.4 | 26.5 | 8.5 |
| YOLOv5x-cls | 224 | 79.0 | 94.5 | 15:04 | 54.3 | 1.8 | 48.1 | 15.9 |

Table Notes

- All checkpoints are trained to 90 epochs with SGD optimizer with lr0=0.001 and weight_decay=5e-5 at image size 224 and all default settings. Runs logged to https://wandb.ai/glenn-jocher/YOLOv5-Classifier-v6-2

- Accuracy values are for single-model single-scale on ImageNet-1k dataset. Reproduce by: python classify/val.py --data ../datasets/imagenet --img 224

- Speed averaged over 100 inference images using a Google Colab Pro V100 High-RAM instance. Reproduce by: python classify/val.py --data ../datasets/imagenet --img 224 --batch 1

- Export to ONNX at FP32 and TensorRT at FP16 done with export.py. Reproduce by: python export.py --weights yolov5s-cls.pdparams --include engine onnx --imgsz 224

Classification Usage Examples

YOLOv5 classification training supports auto-download of MNIST, Fashion-MNIST, CIFAR10, CIFAR100, Imagenette, Imagewoof, and ImageNet datasets with the --data argument. To start training on MNIST for example use --data mnist.

# Single-GPU

python classify/train.py --model yolov5s-cls.pdparams --data cifar100 --img 224 --batch 128

# Multi-GPU DDP

python -m paddle.distributed.launch --gpus 0,1,2,3 classify/train.py --model yolov5s-cls.pdparams --data imagenet --img 224 --device 0,1,2,3

Validate YOLOv5m-cls accuracy on ImageNet-1k dataset:

bash data/scripts/get_imagenet.sh --val # download ImageNet val split (6.3G, 50000 images)

python classify/val.py --weights yolov5m-cls.pdparams --data ../datasets/imagenet --img 224 # validate

Use pretrained YOLOv5s-cls.pdparams to predict bus.jpg:

python classify/predict.py --weights yolov5s-cls.pdparams --data data/images/bus.jpg

Export a group of trained YOLOv5s-cls, ResNet models to ONNX and TensorRT:

python export.py --weights yolov5s-cls.pdparams resnet50.pdparams --include paddleinfer onnx engine openvino paddlelite --img 224
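Both --weights and --include take several values in the export commands of this README. The sketch below shows how such multi-value flags behave under Python's argparse, assuming export.py declares them with nargs="+"; that declaration and the default values are assumptions for illustration, and the real parser in this repository may differ.

```python
import argparse


def make_parser():
    """Build a stand-in parser with two multi-value flags (hypothetical defaults)."""
    parser = argparse.ArgumentParser(description="illustrative stand-in for export.py")
    parser.add_argument("--weights", nargs="+", default=["yolov5s.pdparams"])
    parser.add_argument("--include", nargs="+", default=["onnx"])
    return parser


def parse(argv):
    """Parse an explicit argument list instead of sys.argv."""
    return make_parser().parse_args(argv)


if __name__ == "__main__":
    args = parse(
        ["--weights", "yolov5s-cls.pdparams", "resnet50.pdparams",
         "--include", "onnx", "engine"]
    )
    print(args.weights)
    print(args.include)
    # A trailing comma is not a separator here: it stays inside the token.
    print(parse(["--include", "onnx,", "engine"]).include)
```

Under this assumption the values are separated by spaces only, so a format written as "onnx," would not match a format named "onnx".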