
StableDiffusionLDM3D

diff --git a/docs/source/en/api/pipelines/stable_diffusion/stable_diffusion_xl.md b/docs/source/en/api/pipelines/stable_diffusion/stable_diffusion_xl.md

index aedb03d51caf..d257a6e91edc 100644

--- a/docs/source/en/api/pipelines/stable_diffusion/stable_diffusion_xl.md

+++ b/docs/source/en/api/pipelines/stable_diffusion/stable_diffusion_xl.md

@@ -20,6 +20,9 @@ The abstract from the paper is:

## Tips

+- Using SDXL with a DPM++ scheduler for fewer than 50 steps is known to produce [visual artifacts](https://github.com/huggingface/diffusers/issues/5433) because the solver becomes numerically unstable. To fix this issue, take a look at this [PR](https://github.com/huggingface/diffusers/pull/5541), which recommends the following for ODE/SDE solvers:

+ - set `use_karras_sigmas=True` or `use_lu_lambdas=True` to improve image quality

+ - set `euler_at_final=True` if you're using a solver with uniform step sizes (DPM++2M or DPM++2M SDE)

- Most SDXL checkpoints work best with an image size of 1024x1024. Image sizes of 768x768 and 512x512 are also supported, but the results aren't as good. Anything below 512x512 is not recommended and likely won't work for default checkpoints like [stabilityai/stable-diffusion-xl-base-1.0](https://huggingface.co/stabilityai/stable-diffusion-xl-base-1.0).

- SDXL can pass a different prompt for each of the text encoders it was trained on. We can even pass different parts of the same prompt to the text encoders.

- SDXL output images can be improved by making use of a refiner model in an image-to-image setting.
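The sketch below shows in plain Python what `use_karras_sigmas=True` changes. It implements the Karras et al. noise-level spacing as a conceptual illustration, not the scheduler's actual code. `karras_sigmas` is a hypothetical helper, and the `sigma_min`, `sigma_max`, and `rho` values are illustrative assumptions rather than SDXL's configuration.

```python
def karras_sigmas(n, sigma_min=0.03, sigma_max=14.6, rho=7.0):
    """Return n noise levels (n >= 2), spaced from sigma_max down to sigma_min.

    Interpolates linearly in sigma**(1/rho) space, then raises back to the
    power rho, which packs more steps into the low-noise end of the schedule.
    """
    min_inv_rho = sigma_min ** (1 / rho)
    max_inv_rho = sigma_max ** (1 / rho)
    sigmas = []
    for i in range(n):
        ramp = i / (n - 1)  # goes from 0 to 1
        sigmas.append((max_inv_rho + ramp * (min_inv_rho - max_inv_rho)) ** rho)
    return sigmas


# Illustrative values only: sigma_min/sigma_max are assumptions, not SDXL's config
sigmas = karras_sigmas(10)
print([round(s, 3) for s in sigmas])
```

In a pipeline, these options are passed when you swap in the scheduler. For example: `pipeline.scheduler = DPMSolverMultistepScheduler.from_config(pipeline.scheduler.config, use_karras_sigmas=True, euler_at_final=True)`.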

diff --git a/docs/source/en/api/pipelines/unclip.md b/docs/source/en/api/pipelines/unclip.md

index 74258b7f7026..0cb5dc54dc29 100644

--- a/docs/source/en/api/pipelines/unclip.md

+++ b/docs/source/en/api/pipelines/unclip.md

@@ -7,9 +7,9 @@ an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express o

specific language governing permissions and limitations under the License.

-->

-# UnCLIP

+# unCLIP

-[Hierarchical Text-Conditional Image Generation with CLIP Latents](https://huggingface.co/papers/2204.06125) is by Aditya Ramesh, Prafulla Dhariwal, Alex Nichol, Casey Chu, Mark Chen. The UnCLIP model in 🤗 Diffusers comes from kakaobrain's [karlo]((https://github.com/kakaobrain/karlo)).

+[Hierarchical Text-Conditional Image Generation with CLIP Latents](https://huggingface.co/papers/2204.06125) is by Aditya Ramesh, Prafulla Dhariwal, Alex Nichol, Casey Chu, Mark Chen. The unCLIP model in 🤗 Diffusers comes from kakaobrain's [karlo](https://github.com/kakaobrain/karlo).

The abstract from the paper is the following:

@@ -34,4 +34,4 @@ Make sure to check out the Schedulers [guide](../../using-diffusers/schedulers)

- __call__

## ImagePipelineOutput

-[[autodoc]] pipelines.ImagePipelineOutput

\ No newline at end of file

+[[autodoc]] pipelines.ImagePipelineOutput

diff --git a/docs/source/en/index.md b/docs/source/en/index.md

index f4cf2e2114ec..ce6e79ee44d1 100644

--- a/docs/source/en/index.md

+++ b/docs/source/en/index.md

@@ -45,4 +45,4 @@ The library has three main components:

Technical descriptions of how 🤗 Diffusers classes and methods work.

-

\ No newline at end of file

+

diff --git a/docs/source/en/installation.md b/docs/source/en/installation.md

index ee15fb56384d..3bf1d46fd0c7 100644

--- a/docs/source/en/installation.md

+++ b/docs/source/en/installation.md

@@ -50,6 +50,14 @@ pip install diffusers["flax"] transformers

+## Install with conda

+

+After activating your virtual environment, install 🤗 Diffusers with `conda` (maintained by the community):

+

+```bash

+conda install -c conda-forge diffusers

+```

+

## Install from source

Before installing 🤗 Diffusers from source, make sure you have PyTorch and 🤗 Accelerate installed.

diff --git a/docs/source/en/optimization/opt_overview.md b/docs/source/en/optimization/opt_overview.md

index 1f809bb011ce..3a458291ce5b 100644

--- a/docs/source/en/optimization/opt_overview.md

+++ b/docs/source/en/optimization/opt_overview.md

@@ -12,6 +12,6 @@ specific language governing permissions and limitations under the License.

# Overview

-Generating high-quality outputs is computationally intensive, especially during each iterative step where you go from a noisy output to a less noisy output. One of 🤗 Diffuser's goal is to make this technology widely accessible to everyone, which includes enabling fast inference on consumer and specialized hardware.

+Generating high-quality outputs is computationally intensive, especially during each iterative step where you go from a noisy output to a less noisy output. One of 🤗 Diffusers' goals is to make this technology widely accessible to everyone, which includes enabling fast inference on consumer and specialized hardware.

-This section will cover tips and tricks - like half-precision weights and sliced attention - for optimizing inference speed and reducing memory-consumption. You'll also learn how to speed up your PyTorch code with [`torch.compile`](https://pytorch.org/tutorials/intermediate/torch_compile_tutorial.html) or [ONNX Runtime](https://onnxruntime.ai/docs/), and enable memory-efficient attention with [xFormers](https://facebookresearch.github.io/xformers/). There are also guides for running inference on specific hardware like Apple Silicon, and Intel or Habana processors.

\ No newline at end of file

+This section will cover tips and tricks, like half-precision weights and sliced attention, for optimizing inference speed and reducing memory consumption. You'll also learn how to speed up your PyTorch code with [`torch.compile`](https://pytorch.org/tutorials/intermediate/torch_compile_tutorial.html) or [ONNX Runtime](https://onnxruntime.ai/docs/), and enable memory-efficient attention with [xFormers](https://facebookresearch.github.io/xformers/). There are also guides for running inference on specific hardware such as Apple Silicon and Intel or Habana processors.

diff --git a/docs/source/en/quicktour.md b/docs/source/en/quicktour.md

index 3cf6851e4683..c5ead9829cdc 100644

--- a/docs/source/en/quicktour.md

+++ b/docs/source/en/quicktour.md

@@ -26,7 +26,7 @@ The quicktour will show you how to use the [`DiffusionPipeline`] for inference,

-The quicktour is a simplified version of the introductory 🧨 Diffusers [notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/diffusers_intro.ipynb) to help you get started quickly. If you want to learn more about 🧨 Diffusers goal, design philosophy, and additional details about it's core API, check out the notebook!

+The quicktour is a simplified version of the introductory 🧨 Diffusers [notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/diffusers_intro.ipynb) to help you get started quickly. If you want to learn more about 🧨 Diffusers' goal, design philosophy, and additional details about its core API, check out the notebook!

@@ -76,7 +76,7 @@ The [`DiffusionPipeline`] downloads and caches all modeling, tokenization, and s

>>> pipeline

StableDiffusionPipeline {

"_class_name": "StableDiffusionPipeline",

- "_diffusers_version": "0.13.1",

+ "_diffusers_version": "0.21.4",

...,

"scheduler": [

"diffusers",

@@ -133,7 +133,7 @@ Then load the saved weights into the pipeline:

>>> pipeline = DiffusionPipeline.from_pretrained("./stable-diffusion-v1-5", use_safetensors=True)

```

-Now you can run the pipeline as you would in the section above.

+Now, you can run the pipeline as you would in the section above.

### Swapping schedulers

@@ -191,7 +191,7 @@ To use the model for inference, create the image shape with random Gaussian nois

torch.Size([1, 3, 256, 256])

```

-For inference, pass the noisy image to the model and a `timestep`. The `timestep` indicates how noisy the input image is, with more noise at the beginning and less at the end. This helps the model determine its position in the diffusion process, whether it is closer to the start or the end. Use the `sample` method to get the model output:

+For inference, pass the noisy image and a `timestep` to the model. The `timestep` indicates how noisy the input image is, with more noise at the beginning and less at the end. This helps the model determine its position in the diffusion process, whether it is closer to the start or the end. Use the `sample` method to get the model output:

```py

>>> with torch.no_grad():

@@ -210,23 +210,28 @@ Schedulers manage going from a noisy sample to a less noisy sample given the mod

-For the quicktour, you'll instantiate the [`DDPMScheduler`] with it's [`~diffusers.ConfigMixin.from_config`] method:

+For the quicktour, you'll instantiate the [`DDPMScheduler`] with its [`~diffusers.SchedulerMixin.from_pretrained`] method:

```py

>>> from diffusers import DDPMScheduler

->>> scheduler = DDPMScheduler.from_config(repo_id)

+>>> scheduler = DDPMScheduler.from_pretrained(repo_id)

>>> scheduler

DDPMScheduler {

"_class_name": "DDPMScheduler",

- "_diffusers_version": "0.13.1",

+ "_diffusers_version": "0.21.4",

"beta_end": 0.02,

"beta_schedule": "linear",

"beta_start": 0.0001,

"clip_sample": true,

"clip_sample_range": 1.0,

+ "dynamic_thresholding_ratio": 0.995,

"num_train_timesteps": 1000,

"prediction_type": "epsilon",

+ "sample_max_value": 1.0,

+ "steps_offset": 0,

+ "thresholding": false,

+ "timestep_spacing": "leading",

"trained_betas": null,

"variance_type": "fixed_small"

}

@@ -234,13 +239,13 @@ DDPMScheduler {

-💡 Notice how the scheduler is instantiated from a configuration. Unlike a model, a scheduler does not have trainable weights and is parameter-free!

+💡 Unlike a model, a scheduler does not have trainable weights and is parameter-free!

Some of the most important parameters are:

-* `num_train_timesteps`: the length of the denoising process or in other words, the number of timesteps required to process random Gaussian noise into a data sample.

+* `num_train_timesteps`: the length of the denoising process or, in other words, the number of timesteps required to process random Gaussian noise into a data sample.

* `beta_schedule`: the type of noise schedule to use for inference and training.

* `beta_start` and `beta_end`: the start and end noise values for the noise schedule.
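The sketch below makes `beta_schedule`, `beta_start`, and `beta_end` concrete. In plain Python, it builds the `"linear"` schedule from the config above. It then derives the cumulative product of `1 - beta`, which tracks how much of the original signal remains at each timestep. This is an illustration under those assumptions, not the scheduler's own code; `linear_betas` is a hypothetical helper.

```python
def linear_betas(beta_start=0.0001, beta_end=0.02, num_train_timesteps=1000):
    """Evenly spaced noise levels from beta_start to beta_end (like a linspace)."""
    step = (beta_end - beta_start) / (num_train_timesteps - 1)
    return [beta_start + i * step for i in range(num_train_timesteps)]


betas = linear_betas()

# alphas_cumprod[t] = product of (1 - beta) up to t: the share of signal left at t
alphas_cumprod = []
running = 1.0
for beta in betas:
    running *= 1.0 - beta
    alphas_cumprod.append(running)

print(betas[0], betas[-1])
print(alphas_cumprod[0], alphas_cumprod[-1])  # near 1 at the start, near 0 at the end
```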

@@ -249,9 +254,10 @@ To predict a slightly less noisy image, pass the following to the scheduler's [`

```py

>>> less_noisy_sample = scheduler.step(model_output=noisy_residual, timestep=2, sample=noisy_sample).prev_sample

>>> less_noisy_sample.shape

+torch.Size([1, 3, 256, 256])

```
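For an epsilon-predicting model, one DDPM reverse step removes the predicted noise. Except at the final step, it then adds back a little fresh noise. The scalar sketch below illustrates the textbook update from the DDPM paper with the `"fixed_small"` variance. It is a simplified illustration, not `DDPMScheduler.step` itself, which works on tensors and by default also clips the predicted clean sample (`clip_sample=True`). `ddpm_step` is a hypothetical helper.

```python
import math
import random


def ddpm_step(x_t, eps, t, betas, alphas_cumprod, noise=None):
    """One reverse step x_t -> x_{t-1} for a single scalar (no clipping)."""
    beta_t = betas[t]
    alpha_t = 1.0 - beta_t
    alpha_bar_t = alphas_cumprod[t]
    # Posterior mean: subtract the scaled noise prediction, then rescale
    mean = (x_t - beta_t / math.sqrt(1.0 - alpha_bar_t) * eps) / math.sqrt(alpha_t)
    if t == 0:
        return mean  # no noise is added at the final step
    alpha_bar_prev = alphas_cumprod[t - 1]
    variance = beta_t * (1.0 - alpha_bar_prev) / (1.0 - alpha_bar_t)  # "fixed_small"
    z = random.gauss(0.0, 1.0) if noise is None else noise
    return mean + math.sqrt(variance) * z


# Linear schedule matching the config above
betas = [0.0001 + i * (0.02 - 0.0001) / 999 for i in range(1000)]
alphas_cumprod = []
prod = 1.0
for b in betas:
    prod *= 1.0 - b
    alphas_cumprod.append(prod)

# noise=0.0 makes the step deterministic for illustration
less_noisy = ddpm_step(x_t=0.5, eps=0.1, t=2, betas=betas, alphas_cumprod=alphas_cumprod, noise=0.0)
print(less_noisy)
```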

-The `less_noisy_sample` can be passed to the next `timestep` where it'll get even less noisier! Let's bring it all together now and visualize the entire denoising process.

+The `less_noisy_sample` can be passed to the next `timestep` where it'll get even less noisy! Let's bring it all together now and visualize the entire denoising process.

First, create a function that postprocesses and displays the denoised image as a `PIL.Image`:

@@ -305,10 +311,10 @@ Sit back and watch as a cat is generated from nothing but noise! 😻

## Next steps

-Hopefully you generated some cool images with 🧨 Diffusers in this quicktour! For your next steps, you can:

+Hopefully, you generated some cool images with 🧨 Diffusers in this quicktour! For your next steps, you can:

* Train or finetune a model to generate your own images in the [training](./tutorials/basic_training) tutorial.

* See example official and community [training or finetuning scripts](https://github.com/huggingface/diffusers/tree/main/examples#-diffusers-examples) for a variety of use cases.

-* Learn more about loading, accessing, changing and comparing schedulers in the [Using different Schedulers](./using-diffusers/schedulers) guide.

-* Explore prompt engineering, speed and memory optimizations, and tips and tricks for generating higher quality images with the [Stable Diffusion](./stable_diffusion) guide.

+* Learn more about loading, accessing, changing, and comparing schedulers in the [Using different Schedulers](./using-diffusers/schedulers) guide.

+* Explore prompt engineering, speed and memory optimizations, and tips and tricks for generating higher-quality images with the [Stable Diffusion](./stable_diffusion) guide.

* Dive deeper into speeding up 🧨 Diffusers with guides on [optimized PyTorch on a GPU](./optimization/fp16), and inference guides for running [Stable Diffusion on Apple Silicon (M1/M2)](./optimization/mps) and [ONNX Runtime](./optimization/onnx).

diff --git a/docs/source/en/stable_diffusion.md b/docs/source/en/stable_diffusion.md

index f9407c3266c1..06eb5bf15f23 100644

--- a/docs/source/en/stable_diffusion.md

+++ b/docs/source/en/stable_diffusion.md

@@ -16,7 +16,7 @@ specific language governing permissions and limitations under the License.

Getting the [`DiffusionPipeline`] to generate images in a certain style or include what you want can be tricky. Often times, you have to run the [`DiffusionPipeline`] several times before you end up with an image you're happy with. But generating something out of nothing is a computationally intensive process, especially if you're running inference over and over again.

-This is why it's important to get the most *computational* (speed) and *memory* (GPU RAM) efficiency from the pipeline to reduce the time between inference cycles so you can iterate faster.

+This is why it's important to get the most *computational* (speed) and *memory* (GPU VRAM) efficiency from the pipeline to reduce the time between inference cycles so you can iterate faster.

This tutorial walks you through how to generate faster and better with the [`DiffusionPipeline`].

@@ -108,6 +108,7 @@ pipeline.scheduler.compatibles

diffusers.schedulers.scheduling_ddpm.DDPMScheduler,

diffusers.schedulers.scheduling_dpmsolver_singlestep.DPMSolverSinglestepScheduler,

diffusers.schedulers.scheduling_k_dpm_2_ancestral_discrete.KDPM2AncestralDiscreteScheduler,

+ diffusers.utils.dummy_torch_and_torchsde_objects.DPMSolverSDEScheduler,

diffusers.schedulers.scheduling_heun_discrete.HeunDiscreteScheduler,

diffusers.schedulers.scheduling_pndm.PNDMScheduler,

diffusers.schedulers.scheduling_euler_ancestral_discrete.EulerAncestralDiscreteScheduler,

@@ -115,7 +116,7 @@ pipeline.scheduler.compatibles

]

```

-The Stable Diffusion model uses the [`PNDMScheduler`] by default which usually requires ~50 inference steps, but more performant schedulers like [`DPMSolverMultistepScheduler`], require only ~20 or 25 inference steps. Use the [`ConfigMixin.from_config`] method to load a new scheduler:

+The Stable Diffusion model uses the [`PNDMScheduler`] by default, which usually requires ~50 inference steps, but more performant schedulers like [`DPMSolverMultistepScheduler`] require only ~20 or 25 inference steps. Use the [`~ConfigMixin.from_config`] method to load a new scheduler:

```python

from diffusers import DPMSolverMultistepScheduler

@@ -155,13 +156,13 @@ def get_inputs(batch_size=1):

Start with `batch_size=4` and see how much memory you've consumed:

```python

-from diffusers.utils import make_image_grid

+from diffusers.utils import make_image_grid

images = pipeline(**get_inputs(batch_size=4)).images

make_image_grid(images, 2, 2)

```

-Unless you have a GPU with more RAM, the code above probably returned an `OOM` error! Most of the memory is taken up by the cross-attention layers. Instead of running this operation in a batch, you can run it sequentially to save a significant amount of memory. All you have to do is configure the pipeline to use the [`~DiffusionPipeline.enable_attention_slicing`] function:

+Unless you have a GPU with more VRAM, the code above probably returned an `OOM` error! Most of the memory is taken up by the cross-attention layers. Instead of running this operation in a batch, you can run it sequentially to save a significant amount of memory. All you have to do is configure the pipeline to use the [`~DiffusionPipeline.enable_attention_slicing`] function:

```python

pipeline.enable_attention_slicing()

diff --git a/docs/source/en/tutorials/autopipeline.md b/docs/source/en/tutorials/autopipeline.md

index 973a83c73eb1..fcc6f5300eab 100644

--- a/docs/source/en/tutorials/autopipeline.md

+++ b/docs/source/en/tutorials/autopipeline.md

@@ -1,3 +1,15 @@

+

+

# AutoPipeline

🤗 Diffusers is able to complete many different tasks, and you can often reuse the same pretrained weights for multiple tasks such as text-to-image, image-to-image, and inpainting. If you're new to the library and diffusion models though, it may be difficult to know which pipeline to use for a task. For example, if you're using the [runwayml/stable-diffusion-v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5) checkpoint for text-to-image, you might not know that you could also use it for image-to-image and inpainting by loading the checkpoint with the [`StableDiffusionImg2ImgPipeline`] and [`StableDiffusionInpaintPipeline`] classes respectively.

@@ -6,7 +18,7 @@ The `AutoPipeline` class is designed to simplify the variety of pipelines in

-Take a look at the [AutoPipeline](./pipelines/auto_pipeline) reference to see which tasks are supported. Currently, it supports text-to-image, image-to-image, and inpainting.

+Take a look at the [AutoPipeline](../api/pipelines/auto_pipeline) reference to see which tasks are supported. Currently, it supports text-to-image, image-to-image, and inpainting.

@@ -26,6 +38,7 @@ pipeline = AutoPipelineForText2Image.from_pretrained(

prompt = "peasant and dragon combat, wood cutting style, viking era, bevel with rune"

image = pipeline(prompt, num_inference_steps=25).images[0]

+image

```

@@ -35,12 +48,16 @@ image = pipeline(prompt, num_inference_steps=25).images[0]

Under the hood, [`AutoPipelineForText2Image`]:

1. automatically detects a `"stable-diffusion"` class from the [`model_index.json`](https://huggingface.co/runwayml/stable-diffusion-v1-5/blob/main/model_index.json) file

-2. loads the corresponding text-to-image [`StableDiffusionPipline`] based on the `"stable-diffusion"` class name

+2. loads the corresponding text-to-image [`StableDiffusionPipeline`] based on the `"stable-diffusion"` class name
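The two steps above can be pictured as a lookup from a checkpoint's `model_index.json` to a task-specific pipeline class. The sketch below is a hypothetical plain-Python illustration of that idea. `TASK_TO_PIPELINE` and `resolve_pipeline` are made-up names, and the real AutoPipeline mappings in 🤗 Diffusers may be structured differently.

```python
import json

# Hypothetical mapping for illustration only; not the table Diffusers uses internally
TASK_TO_PIPELINE = {
    "StableDiffusionPipeline": {
        "text2img": "StableDiffusionPipeline",
        "img2img": "StableDiffusionImg2ImgPipeline",
        "inpaint": "StableDiffusionInpaintPipeline",
    },
}


def resolve_pipeline(model_index_json, task):
    """Pick a task-specific pipeline class name from a model_index.json string."""
    class_name = json.loads(model_index_json)["_class_name"]
    try:
        return TASK_TO_PIPELINE[class_name][task]
    except KeyError:
        raise ValueError(f"no {task!r} pipeline known for {class_name!r}") from None


# Trimmed-down stand-in for a checkpoint's model_index.json
model_index = json.dumps({"_class_name": "StableDiffusionPipeline"})
print(resolve_pipeline(model_index, "img2img"))  # StableDiffusionImg2ImgPipeline
```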

Likewise, for image-to-image, [`AutoPipelineForImage2Image`] detects a `"stable-diffusion"` checkpoint from the `model_index.json` file and it'll load the corresponding [`StableDiffusionImg2ImgPipeline`] behind the scenes. You can also pass any additional arguments specific to the pipeline class such as `strength`, which determines the amount of noise or variation added to an input image:

```py

from diffusers import AutoPipelineForImage2Image

+import torch

+import requests

+from PIL import Image

+from io import BytesIO

pipeline = AutoPipelineForImage2Image.from_pretrained(

"runwayml/stable-diffusion-v1-5",

@@ -56,6 +73,7 @@ image = Image.open(BytesIO(response.content)).convert("RGB")

image.thumbnail((768, 768))

image = pipeline(prompt, image, num_inference_steps=200, strength=0.75, guidance_scale=10.5).images[0]

+image

```

@@ -67,6 +85,7 @@ And if you want to do inpainting, then [`AutoPipelineForInpainting`] loads the u

```py

from diffusers import AutoPipelineForInpainting

from diffusers.utils import load_image

+import torch

pipeline = AutoPipelineForInpainting.from_pretrained(

"stabilityai/stable-diffusion-xl-base-1.0", torch_dtype=torch.float16, use_safetensors=True

@@ -80,6 +99,7 @@ mask_image = load_image(mask_url).convert("RGB")

prompt = "A majestic tiger sitting on a bench"

image = pipeline(prompt, image=init_image, mask_image=mask_image, num_inference_steps=50, strength=0.80).images[0]

+image

```

@@ -106,6 +126,7 @@ The [`~AutoPipelineForImage2Image.from_pipe`] method detects the original pipeli

```py

from diffusers import AutoPipelineForText2Image, AutoPipelineForImage2Image

+import torch

pipeline_text2img = AutoPipelineForText2Image.from_pretrained(

"runwayml/stable-diffusion-v1-5", torch_dtype=torch.float16, use_safetensors=True

@@ -126,6 +147,7 @@ If you passed an optional argument - like disabling the safety checker - to the

```py

from diffusers import AutoPipelineForText2Image, AutoPipelineForImage2Image

+import torch

pipeline_text2img = AutoPipelineForText2Image.from_pretrained(

"runwayml/stable-diffusion-v1-5",

@@ -135,7 +157,7 @@ pipeline_text2img = AutoPipelineForText2Image.from_pretrained(

).to("cuda")

pipeline_img2img = AutoPipelineForImage2Image.from_pipe(pipeline_text2img)

-print(pipe.config.requires_safety_checker)

+print(pipeline_img2img.config.requires_safety_checker)

"False"

```

@@ -143,4 +165,6 @@ You can overwrite any of the arguments and even configuration from the original

```py

pipeline_img2img = AutoPipelineForImage2Image.from_pipe(pipeline_text2img, requires_safety_checker=True, strength=0.3)

+print(pipeline_img2img.config.requires_safety_checker)

+"True"

```

diff --git a/docs/source/en/tutorials/basic_training.md b/docs/source/en/tutorials/basic_training.md

index 3a9366baf84a..3b545cdf572e 100644

--- a/docs/source/en/tutorials/basic_training.md

+++ b/docs/source/en/tutorials/basic_training.md

@@ -31,7 +31,7 @@ Before you begin, make sure you have 🤗 Datasets installed to load and preproc

#!pip install diffusers[training]

```

-We encourage you to share your model with the community, and in order to do that, you'll need to login to your Hugging Face account (create one [here](https://hf.co/join) if you don't already have one!). You can login from a notebook and enter your token when prompted:

+We encourage you to share your model with the community, and in order to do that, you'll need to log in to your Hugging Face account (create one [here](https://hf.co/join) if you don't already have one!). You can log in from a notebook and enter your token when prompted. Make sure your token has the write role.

```py

>>> from huggingface_hub import notebook_login

@@ -59,7 +59,6 @@ For convenience, create a `TrainingConfig` class containing the training hyperpa

```py

>>> from dataclasses import dataclass

-

>>> @dataclass

... class TrainingConfig:

... image_size = 128 # the generated image resolution

@@ -75,6 +74,7 @@ For convenience, create a `TrainingConfig` class containing the training hyperpa

... output_dir = "ddpm-butterflies-128" # the model name locally and on the HF Hub

... push_to_hub = True # whether to upload the saved model to the HF Hub

+... hub_model_id = "<your-username>/<my-awesome-model>" # the name of the repository to create on the HF Hub

... hub_private_repo = False

... overwrite_output_dir = True # overwrite the old model when re-running the notebook

... seed = 0

@@ -253,10 +253,8 @@ Then, you'll need a way to evaluate the model. For evaluation, you can use the [

```py

>>> from diffusers import DDPMPipeline

>>> from diffusers.utils import make_image_grid

->>> import math

>>> import os

-

>>> def evaluate(config, epoch, pipeline):

... # Sample some images from random noise (this is the backward diffusion process).

... # The default pipeline output type is `List[PIL.Image]`

diff --git a/docs/source/en/tutorials/tutorial_overview.md b/docs/source/en/tutorials/tutorial_overview.md

index 0cec9a317ddb..85c30256ec89 100644

--- a/docs/source/en/tutorials/tutorial_overview.md

+++ b/docs/source/en/tutorials/tutorial_overview.md

@@ -20,4 +20,4 @@ After completing the tutorials, you'll have gained the necessary skills to start

Feel free to join our community on [Discord](https://discord.com/invite/JfAtkvEtRb) or the [forums](https://discuss.huggingface.co/c/discussion-related-to-httpsgithubcomhuggingfacediffusers/63) to connect and collaborate with other users and developers!

-Let's start diffusing! 🧨

\ No newline at end of file

+Let's start diffusing! 🧨

diff --git a/docs/source/en/tutorials/using_peft_for_inference.md b/docs/source/en/tutorials/using_peft_for_inference.md

index 4629cf8ba43c..2e3337519caa 100644

--- a/docs/source/en/tutorials/using_peft_for_inference.md

+++ b/docs/source/en/tutorials/using_peft_for_inference.md

@@ -10,11 +10,11 @@ an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express o

specific language governing permissions and limitations under the License.

-->

-[[open-in-colab]]

+[[open-in-colab]]

# Inference with PEFT

-There are many adapters trained in different styles to achieve different effects. You can even combine multiple adapters to create new and unique images. With the 🤗 [PEFT](https://huggingface.co/docs/peft/index) integration in 🤗 Diffusers, it is really easy to load and manage adapters for inference. In this guide, you'll learn how to use different adapters with [Stable Diffusion XL (SDXL)](./pipelines/stable_diffusion/stable_diffusion_xl) for inference.

+There are many adapters trained in different styles to achieve different effects. You can even combine multiple adapters to create new and unique images. With the 🤗 [PEFT](https://huggingface.co/docs/peft/index) integration in 🤗 Diffusers, it is really easy to load and manage adapters for inference. In this guide, you'll learn how to use different adapters with [Stable Diffusion XL (SDXL)](../api/pipelines/stable_diffusion/stable_diffusion_xl) for inference.

Throughout this guide, you'll use LoRA as the main adapter technique, so we'll use the terms LoRA and adapter interchangeably. You should have some familiarity with LoRA, and if you don't, we welcome you to check out the [LoRA guide](https://huggingface.co/docs/peft/conceptual_guides/lora).

@@ -63,7 +63,7 @@ image

With the `adapter_name` parameter, it is really easy to use another adapter for inference! Load the [nerijs/pixel-art-xl](https://huggingface.co/nerijs/pixel-art-xl) adapter that has been fine-tuned to generate pixel art images, and let's call it `"pixel"`.

-The pipeline automatically sets the first loaded adapter (`"toy"`) as the active adapter. But you can activate the `"pixel"` adapter with the [`~diffusers.loaders.set_adapters`] method as shown below:

+The pipeline automatically sets the first loaded adapter (`"toy"`) as the active adapter. But you can activate the `"pixel"` adapter with the [`~diffusers.loaders.UNet2DConditionLoadersMixin.set_adapters`] method as shown below:

```python

pipe.load_lora_weights("nerijs/pixel-art-xl", weight_name="pixel-art-xl.safetensors", adapter_name="pixel")

@@ -86,7 +86,7 @@ image

You can also perform multi-adapter inference where you combine different adapter checkpoints for inference.

-Once again, use the [`~diffusers.loaders.set_adapters`] method to activate two LoRA checkpoints and specify the weight for how the checkpoints should be combined.

+Once again, use the [`~diffusers.loaders.UNet2DConditionLoadersMixin.set_adapters`] method to activate two LoRA checkpoints and specify the weight for how the checkpoints should be combined.

```python

pipe.set_adapters(["pixel", "toy"], adapter_weights=[0.5, 1.0])

@@ -116,7 +116,7 @@ image

Impressive! As you can see, the model was able to generate an image that mixes the characteristics of both adapters.
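Conceptually, mixing adapters with `adapter_weights` adds a weighted sum of each adapter's low-rank update `B @ A` to the frozen base weight. The plain-Python sketch below illustrates that idea on tiny 2x2 matrices with made-up values. `merge_loras` is a hypothetical helper. The sketch omits the `lora_alpha / r` scaling factor and does not reflect how PEFT applies adapters at runtime.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def merge_loras(w, adapters, weights):
    """Return W + sum_i weights[i] * (B_i @ A_i) without modifying W."""
    merged = [row[:] for row in w]
    for (b, a), weight in zip(adapters, weights):
        delta = matmul(b, a)  # the adapter's low-rank update
        for i in range(len(merged)):
            for j in range(len(merged[0])):
                merged[i][j] += weight * delta[i][j]
    return merged


w = [[1.0, 0.0], [0.0, 1.0]]            # frozen base weight (made-up values)
pixel = ([[1.0], [0.0]], [[0.0, 2.0]])  # rank-1 (B, A) pair, made-up values
toy = ([[0.0], [1.0]], [[3.0, 0.0]])

# Mirrors the weights in pipe.set_adapters(["pixel", "toy"], adapter_weights=[0.5, 1.0])
merged = merge_loras(w, [pixel, toy], [0.5, 1.0])
print(merged)  # [[1.0, 1.0], [3.0, 1.0]]
```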

-If you want to go back to using only one adapter, use the [`~diffusers.loaders.set_adapters`] method to activate the `"toy"` adapter:

+If you want to go back to using only one adapter, use the [`~diffusers.loaders.UNet2DConditionLoadersMixin.set_adapters`] method to activate the `"toy"` adapter:

```python

# First, set the adapter.

@@ -134,7 +134,7 @@ image

-If you want to switch to only the base model, disable all LoRAs with the [`~diffusers.loaders.disable_lora`] method.

+If you want to switch to only the base model, disable all LoRAs with the [`~diffusers.loaders.UNet2DConditionLoadersMixin.disable_lora`] method.

```python

@@ -150,16 +150,18 @@ image

## Monitoring active adapters

-You have attached multiple adapters in this tutorial, and if you're feeling a bit lost on what adapters have been attached to the pipeline's components, you can easily check the list of active adapters using the [`~diffusers.loaders.get_active_adapters`] method:

+You have attached multiple adapters in this tutorial, and if you're feeling a bit lost about which adapters have been attached to the pipeline's components, you can easily check the list of active adapters using the [`~diffusers.loaders.LoraLoaderMixin.get_active_adapters`] method:

-```python

+```py

active_adapters = pipe.get_active_adapters()

->>> ["toy", "pixel"]

+active_adapters

+["toy", "pixel"]

```

-You can also get the active adapters of each pipeline component with [`~diffusers.loaders.get_list_adapters`]:

+You can also get the active adapters of each pipeline component with [`~diffusers.loaders.LoraLoaderMixin.get_list_adapters`]:

-```python

+```py

list_adapters_component_wise = pipe.get_list_adapters()

->>> {"text_encoder": ["toy", "pixel"], "unet": ["toy", "pixel"], "text_encoder_2": ["toy", "pixel"]}

+list_adapters_component_wise

+{"text_encoder": ["toy", "pixel"], "unet": ["toy", "pixel"], "text_encoder_2": ["toy", "pixel"]}

```

diff --git a/docs/source/en/using-diffusers/control_brightness.md b/docs/source/en/using-diffusers/control_brightness.md

index c56c757bb1bc..17c107ba57b8 100644

--- a/docs/source/en/using-diffusers/control_brightness.md

+++ b/docs/source/en/using-diffusers/control_brightness.md

@@ -1,3 +1,15 @@

+

+

# Control image brightness

The Stable Diffusion pipeline is mediocre at generating images that are either very bright or dark as explained in the [Common Diffusion Noise Schedules and Sample Steps are Flawed](https://huggingface.co/papers/2305.08891) paper. The solutions proposed in the paper are currently implemented in the [`DDIMScheduler`] which you can use to improve the lighting in your images.
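The paper's core fix (its Algorithm 1) rescales the noise schedule so that the final timestep has zero signal-to-noise ratio, meaning it is pure noise. The first timestep is left unchanged. Here is a plain-Python sketch of that rescaling. The helper name and the linear beta schedule used as input are illustrative assumptions. In 🤗 Diffusers, the equivalent behavior is enabled through the [`DDIMScheduler`]'s `rescale_betas_zero_snr` option.

```python
import math


def rescale_zero_terminal_snr(betas):
    """Rescale betas so the last timestep has zero SNR (sketch of Algorithm 1)."""
    alphas_bar = []
    prod = 1.0
    for beta in betas:
        prod *= 1.0 - beta
        alphas_bar.append(prod)
    sqrt_ab = [math.sqrt(a) for a in alphas_bar]
    first, last = sqrt_ab[0], sqrt_ab[-1]
    # Shift so the last value becomes 0, then scale so the first value is restored
    sqrt_ab = [(s - last) * first / (first - last) for s in sqrt_ab]
    alphas_bar = [s * s for s in sqrt_ab]
    # Convert cumulative products back to per-step alphas, then to betas
    alphas = [alphas_bar[0]] + [alphas_bar[i] / alphas_bar[i - 1] for i in range(1, len(alphas_bar))]
    return [1.0 - a for a in alphas]


betas = [0.00085 + i * (0.012 - 0.00085) / 999 for i in range(1000)]  # illustrative schedule
new_betas = rescale_zero_terminal_snr(betas)
print(new_betas[-1])  # 1.0: the final step is pure noise
```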

diff --git a/docs/source/en/using-diffusers/controlnet.md b/docs/source/en/using-diffusers/controlnet.md

index 9af2806672be..71fd3c7a307e 100644

--- a/docs/source/en/using-diffusers/controlnet.md

+++ b/docs/source/en/using-diffusers/controlnet.md

@@ -1,3 +1,15 @@

+

+

# ControlNet

ControlNet is a type of model for controlling image diffusion models by conditioning the model with an additional input image. There are many types of conditioning inputs (canny edge, user sketching, human pose, depth, and more) you can use to control a diffusion model. This is hugely useful because it affords you greater control over image generation, making it easier to generate specific images without experimenting with different text prompts or denoising values as much.

diff --git a/docs/source/en/using-diffusers/diffedit.md b/docs/source/en/using-diffusers/diffedit.md

index 4c32eb4c482b..1c4a347e7396 100644

--- a/docs/source/en/using-diffusers/diffedit.md

+++ b/docs/source/en/using-diffusers/diffedit.md

@@ -1,3 +1,15 @@

+

+

# DiffEdit

[[open-in-colab]]

diff --git a/docs/source/en/using-diffusers/distilled_sd.md b/docs/source/en/using-diffusers/distilled_sd.md

index 7653300b92ab..2dd96d98861d 100644

--- a/docs/source/en/using-diffusers/distilled_sd.md

+++ b/docs/source/en/using-diffusers/distilled_sd.md

@@ -1,3 +1,15 @@

+

+

# Distilled Stable Diffusion inference

[[open-in-colab]]

diff --git a/docs/source/en/using-diffusers/freeu.md b/docs/source/en/using-diffusers/freeu.md

index 6c23ec754382..4f3c64096705 100644

--- a/docs/source/en/using-diffusers/freeu.md

+++ b/docs/source/en/using-diffusers/freeu.md

@@ -1,3 +1,15 @@

+

+

# Improve generation quality with FreeU

[[open-in-colab]]

diff --git a/docs/source/en/using-diffusers/kandinsky.md b/docs/source/en/using-diffusers/kandinsky.md

new file mode 100644

index 000000000000..4ca544270766

--- /dev/null

+++ b/docs/source/en/using-diffusers/kandinsky.md

@@ -0,0 +1,692 @@

+# Kandinsky

+

+[[open-in-colab]]

+

+The Kandinsky models are a series of multilingual text-to-image generation models. The Kandinsky 2.0 model uses two multilingual text encoders and concatenates those results for the UNet.

+

+[Kandinsky 2.1](../api/pipelines/kandinsky) changes the architecture to include an image prior model ([`CLIP`](https://huggingface.co/docs/transformers/model_doc/clip)) to generate a mapping between text and image embeddings. The mapping provides better text-image alignment and is used with the text embeddings during training, leading to higher-quality results. Finally, Kandinsky 2.1 decodes the latents into images with a [Modulating Quantized Vectors (MoVQ)](https://huggingface.co/papers/2209.09002) decoder, which adds a spatial conditional normalization layer to increase photorealism.

+

+[Kandinsky 2.2](../api/pipelines/kandinsky_v22) improves on the previous model by replacing the image encoder of the image prior model with a larger CLIP-ViT-G model to improve quality. The image prior model was also retrained on images with different resolutions and aspect ratios to generate higher-resolution images and different image sizes.

+

+This guide will show you how to use the Kandinsky models for text-to-image, image-to-image, inpainting, interpolation, and more.

+

+Before you begin, make sure you have the following libraries installed:

+

+```py

+# uncomment to install the necessary libraries in Colab

+#!pip install transformers accelerate safetensors

+```

+

+

+

+Kandinsky 2.1 and 2.2 usage is very similar! The only difference is Kandinsky 2.2 doesn't accept `prompt` as an input when decoding the latents. Instead, Kandinsky 2.2 only accepts `image_embeds` during decoding.
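This difference matters if you write code that targets both versions. Below is a minimal sketch, assuming you choose the version yourself: the hypothetical helper `decoder_kwargs` (not part of 🤗 Diffusers) builds the keyword arguments for the decoding pipeline and drops `prompt` for Kandinsky 2.2.

```python
def decoder_kwargs(version, prompt, image_embeds, negative_image_embeds, **extra):
    """Hypothetical helper: build decoder-pipeline kwargs for Kandinsky 2.1 or 2.2.

    The Kandinsky 2.1 decoder accepts `prompt`; the Kandinsky 2.2 decoder only
    takes the image embeddings, so `prompt` is left out for "2.2".
    """
    kwargs = {
        "image_embeds": image_embeds,
        "negative_image_embeds": negative_image_embeds,
        **extra,  # e.g. height, width, negative_prompt (2.1 only)
    }
    if version == "2.1":
        kwargs["prompt"] = prompt
    elif version != "2.2":
        raise ValueError(f"unsupported Kandinsky version: {version!r}")
    return kwargs
```

You would then call the decoder as `pipeline(**decoder_kwargs("2.2", prompt, image_embeds, negative_image_embeds, height=768, width=768)).images[0]`.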

+

+

+

+## Text-to-image

+

+To use the Kandinsky models for any task, you always start by setting up the prior pipeline to encode the prompt and generate the image embeddings. The prior pipeline also generates `negative_image_embeds` that correspond to the negative prompt `""`. For better results, you can pass an actual `negative_prompt` to the prior pipeline, but this'll increase the effective batch size of the prior pipeline by 2x.

+

+

+

+

+```py

+from diffusers import KandinskyPriorPipeline, KandinskyPipeline

+import torch

+

+prior_pipeline = KandinskyPriorPipeline.from_pretrained("kandinsky-community/kandinsky-2-1-prior", torch_dtype=torch.float16).to("cuda")

+pipeline = KandinskyPipeline.from_pretrained("kandinsky-community/kandinsky-2-1", torch_dtype=torch.float16).to("cuda")

+

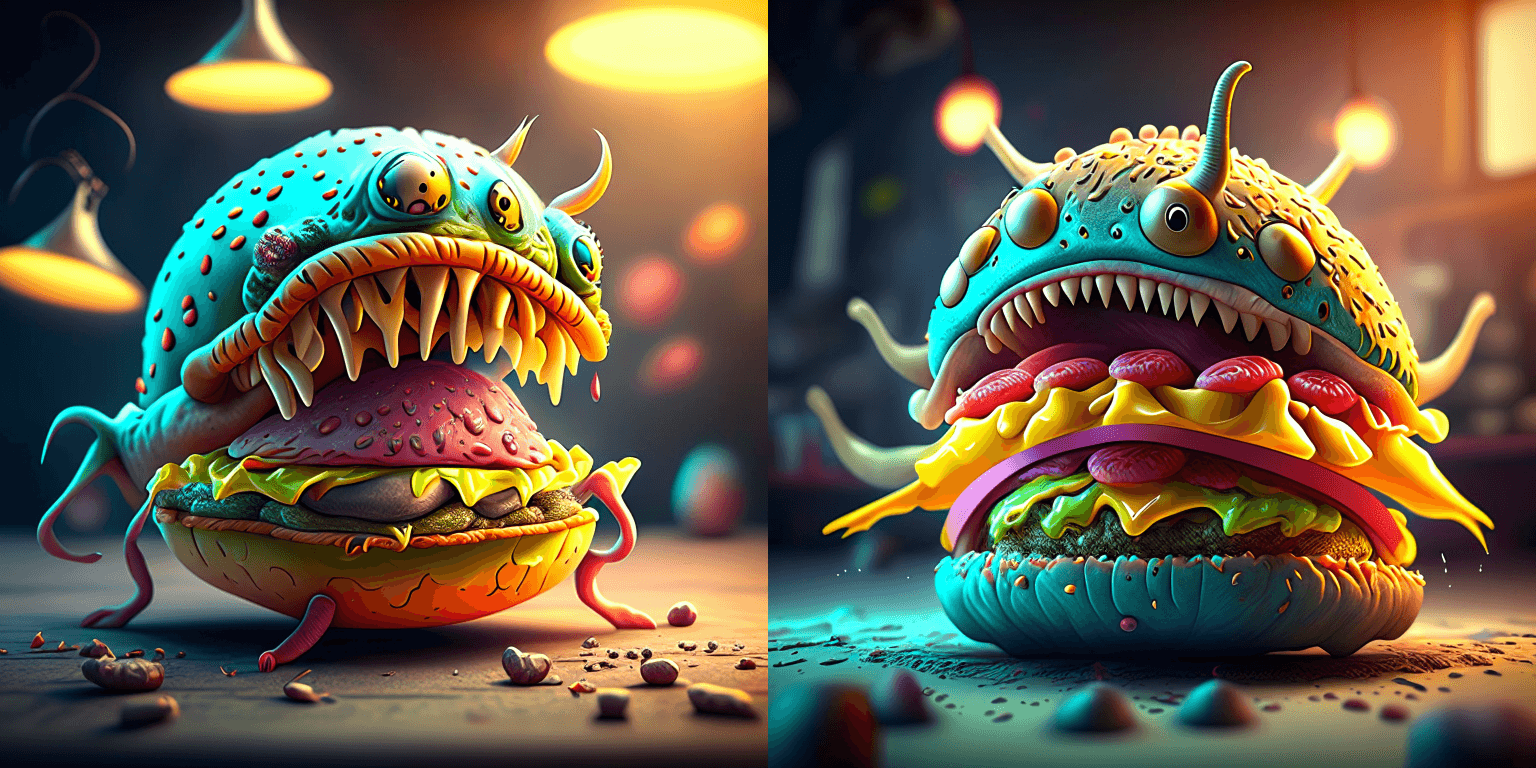

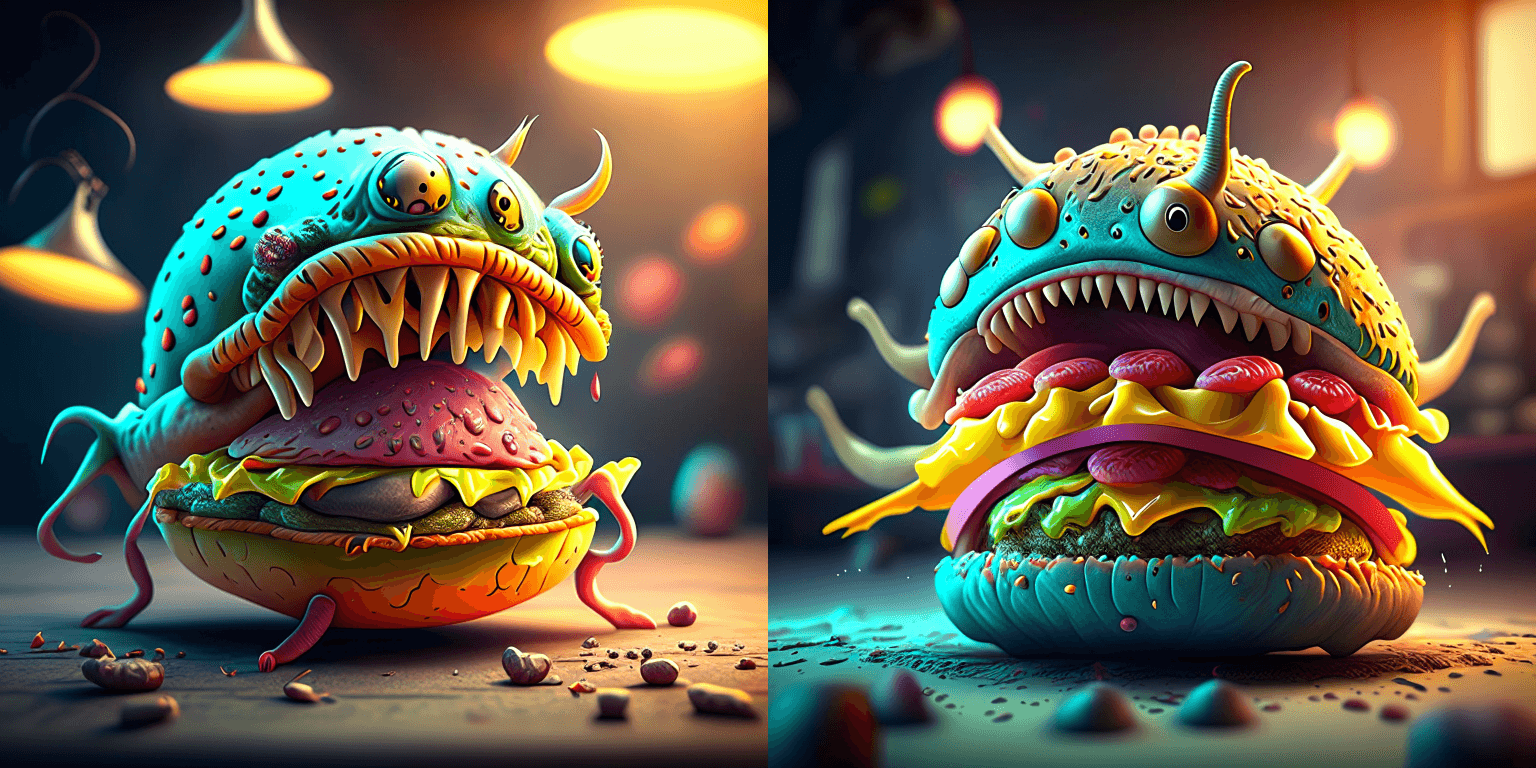

+prompt = "An alien cheeseburger creature eating itself, claymation, cinematic, moody lighting"

+negative_prompt = "low quality, bad quality" # optional to include a negative prompt, but results are usually better

+image_embeds, negative_image_embeds = prior_pipeline(prompt, negative_prompt, guidance_scale=1.0).to_tuple()

+```

+

+Now pass all the prompts and embeddings to the [`KandinskyPipeline`] to generate an image:

+

+```py

+image = pipeline(prompt, image_embeds=image_embeds, negative_prompt=negative_prompt, negative_image_embeds=negative_image_embeds, height=768, width=768).images[0]

+```
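For comparison, here is a sketch of the same text-to-image flow with Kandinsky 2.2, assuming the `kandinsky-community/kandinsky-2-2-prior` and `kandinsky-community/kandinsky-2-2-decoder` checkpoints and a CUDA GPU. The work is wrapped in a function (`text_to_image_v22` is an illustrative name) with the imports inside it, so nothing is downloaded until you call it. Note that the decoder call receives no `prompt`.

```python
def text_to_image_v22(prompt, negative_prompt=None, height=768, width=768):
    # Imports live inside the function so defining it doesn't require diffusers or a GPU.
    import torch
    from diffusers import KandinskyV22Pipeline, KandinskyV22PriorPipeline

    prior_pipeline = KandinskyV22PriorPipeline.from_pretrained(
        "kandinsky-community/kandinsky-2-2-prior", torch_dtype=torch.float16
    ).to("cuda")
    pipeline = KandinskyV22Pipeline.from_pretrained(
        "kandinsky-community/kandinsky-2-2-decoder", torch_dtype=torch.float16
    ).to("cuda")

    # The prior turns the prompt (and optional negative prompt) into image embeddings.
    image_embeds, negative_image_embeds = prior_pipeline(
        prompt, negative_prompt, guidance_scale=1.0
    ).to_tuple()

    # Unlike Kandinsky 2.1, the 2.2 decoder takes only the embeddings, not the prompt.
    return pipeline(
        image_embeds=image_embeds,
        negative_image_embeds=negative_image_embeds,
        height=height,
        width=width,
    ).images[0]
```

This sketch reloads both pipelines on every call; for repeated generations, load them once and reuse them.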

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

+

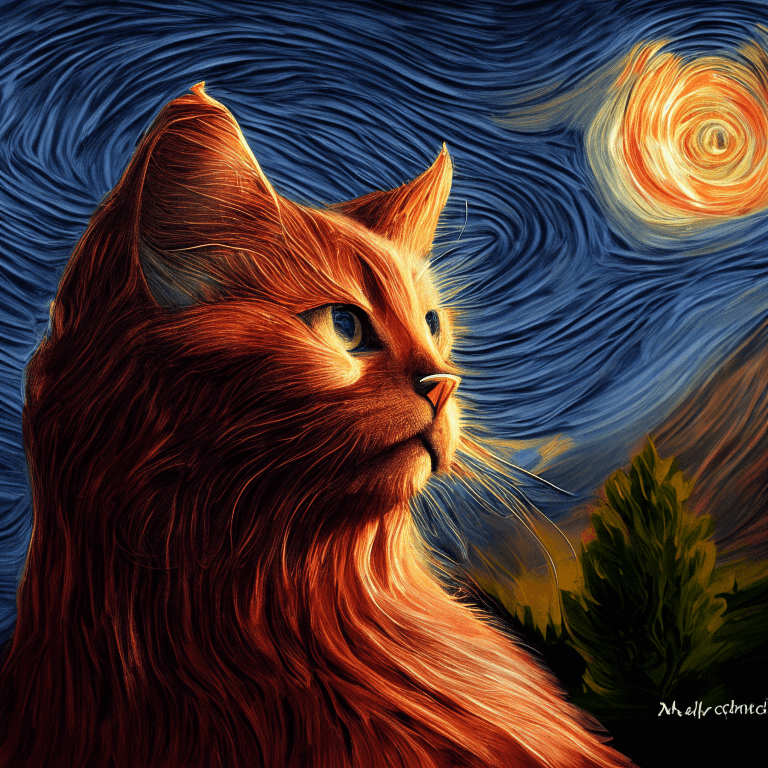

+ a cat

+

+

+

+ Van Gogh's Starry Night painting

+

+

+

+

+

+

+

+

+

+

+

+

+

+  +

+

+

+

+# Diffusers

+

+🤗 Diffusers is the go-to library for state-of-the-art pretrained diffusion models for generating images, audio, and even 3D structures of molecules. Whether you're looking for a simple inference solution or want to train your own diffusion model, 🤗 Diffusers is a modular toolbox that supports both. Our library is designed with a focus on [usability over performance](conceptual/philosophy#usability-over-performance), [simple over easy](conceptual/philosophy#simple-over-easy), and [customizability over abstractions](conceptual/philosophy#tweakable-contributorfriendly-over-abstraction).
+
+The library has three main components:
+
+- State-of-the-art diffusion pipelines for inference with just a few lines of code. There are many pipelines in 🤗 Diffusers; check out the table in the pipeline [overview](api/pipelines/overview) for a complete list of available pipelines and the tasks they solve.
+- Interchangeable [noise schedulers](api/schedulers/overview) for balancing trade-offs between generation speed and quality.
+- Pretrained [models](api/models) that can be used as building blocks, and combined with schedulers, for creating your own end-to-end diffusion systems.
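Because schedulers are interchangeable, swapping one usually means building a new scheduler from the current one's configuration. A minimal sketch, assuming `pipeline` is an already loaded pipeline with a `scheduler` attribute and `scheduler_cls` is a 🤗 Diffusers scheduler class such as `EulerDiscreteScheduler`; `swap_scheduler` itself is a hypothetical helper:

```python
def swap_scheduler(pipeline, scheduler_cls):
    # Hypothetical helper: rebuild the scheduler from the current scheduler's config
    # (number of training timesteps, beta schedule, ...) so it stays compatible with the model.
    pipeline.scheduler = scheduler_cls.from_config(pipeline.scheduler.config)
    return pipeline
```

For example: `from diffusers import EulerDiscreteScheduler`, then `swap_scheduler(pipeline, EulerDiscreteScheduler)`.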

+

+

diff --git a/docs/source/pt/installation.md b/docs/source/pt/installation.md

new file mode 100644

index 000000000000..52ea243ee034

--- /dev/null

+++ b/docs/source/pt/installation.md

@@ -0,0 +1,156 @@

+

+

+# Installation
+
+🤗 Diffusers is tested on Python 3.8+, PyTorch 1.7.0+, and Flax. Follow the installation instructions below for the deep learning library you're using:
+
+- [PyTorch](https://pytorch.org/get-started/locally/) installation instructions
+- [Flax](https://flax.readthedocs.io/en/latest/) installation instructions
+
+## Install with pip
+
+You should install 🤗 Diffusers in a [virtual environment](https://docs.python.org/3/library/venv.html).
+If you're unfamiliar with Python virtual environments, take a look at this [guide](https://packaging.python.org/guides/installing-using-pip-and-virtual-environments/).
+A virtual environment makes it easier to manage different projects and avoid compatibility issues between dependencies.
+
+Start by creating a virtual environment in your project directory:

+

+```bash

+python -m venv .env

+```

+

+Activate the virtual environment:

+

+```bash

+source .env/bin/activate

+```
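To confirm from Python that the virtual environment is active, you can compare two `sys` attributes: inside a `venv`, `sys.prefix` points at the environment while `sys.base_prefix` still points at the base interpreter. A small sketch (the function name is illustrative):

```python
import sys


def in_virtualenv() -> bool:
    # In a venv, sys.prefix is the environment directory and
    # sys.base_prefix is the interpreter it was created from.
    return sys.prefix != sys.base_prefix


print("virtual environment active:", in_virtualenv())
```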

+

+You should also install 🤗 Transformers because 🤗 Diffusers relies on its models:

+

+

+For PyTorch:
+
+```bash
+pip install "diffusers[torch]" transformers

+```

+

+For Flax:
+
+```bash
+pip install "diffusers[flax]" transformers

+```
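After installing, you can check which deep learning backends Python can find. A minimal sketch using `importlib.util.find_spec`, which locates a top-level package without importing it (Flax also depends on `jax`, so both are checked):

```python
import importlib.util


def available_backends():
    # find_spec returns None when a top-level package can't be found.
    return {name: importlib.util.find_spec(name) is not None for name in ("torch", "jax", "flax")}


print(available_backends())
```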

+

+

+

+## Install from source
+
+Before installing 🤗 Diffusers from source, make sure you have PyTorch and 🤗 Accelerate installed.
+
+To install 🤗 Accelerate:

+

+```bash

+pip install accelerate

+```

+

+Then install 🤗 Diffusers from source:

+

+```bash

+pip install git+https://github.com/huggingface/diffusers

+```

+

+This command installs the bleeding-edge `main` version rather than the latest `stable` version.
+The `main` version is useful for staying up to date with the latest developments,
+for instance, if a bug has been fixed since the last stable release but a new release hasn't been published yet.
+However, this means the `main` version may not always be stable.
+We strive to keep the `main` version operational, and most issues are usually resolved within a few hours or a day.
+If you run into a problem, please open an [Issue](https://github.com/huggingface/diffusers/issues/new/choose) so we can fix it as soon as possible!
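To tell which version you ended up with, check the installed version string. Builds of `main` usually carry a PEP 440 `.devN` suffix (for example `0.22.0.dev0`), while stable releases don't. A sketch, assuming that naming convention holds for your build:

```python
from importlib.metadata import PackageNotFoundError, version


def is_dev_build(version_string: str) -> bool:
    # PEP 440 development releases are marked with ".devN", e.g. "0.22.0.dev0".
    return ".dev" in version_string


def installed_diffusers_version():
    try:
        return version("diffusers")
    except PackageNotFoundError:
        return None  # diffusers isn't installed in this environment


v = installed_diffusers_version()
if v is not None:
    print(v, "(main/dev build)" if is_dev_build(v) else "(stable release)")
```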

+

+## Editable install
+
+You will need an editable install if you'd like to:
+
+- Use the `main` version of the source code.
+- Contribute to 🤗 Diffusers and need to test changes in the code.
+
+Clone the repository and install 🤗 Diffusers with the following commands:

+

+```bash

+git clone https://github.com/huggingface/diffusers.git

+cd diffusers

+```

+

+

+For PyTorch:
+

+```bash

+pip install -e ".[torch]"

+```

+

+For Flax:
+

+```bash

+pip install -e ".[flax]"

+```

+

+

+

+These commands link the folder you cloned the repository to with your Python library paths.
+Python will now look inside the folder you cloned to, in addition to the normal library paths.
+For example, if your Python packages are typically installed in `~/anaconda3/envs/main/lib/python3.8/site-packages/`, Python will also search the `~/diffusers/` folder you cloned to.
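To check that Python picks up your clone rather than a copy in `site-packages`, you can ask where a package would be imported from. A sketch using `importlib.util.find_spec` (the helper name is illustrative); with an editable install, the path printed for `diffusers` should point inside your cloned repository:

```python
import importlib.util


def package_location(name):
    # Return the file the package would be imported from, or None if it isn't found.
    spec = importlib.util.find_spec(name)
    return spec.origin if spec is not None else None


print(package_location("diffusers"))
```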

+

+

+

+You must keep the `diffusers` folder if you want to keep using the library.

+

+

+

+Now you can easily update your clone to the latest version of 🤗 Diffusers with the following command:

+

+```bash

+cd ~/diffusers/

+git pull

+```

+

+Your Python environment will find the `main` version of 🤗 Diffusers on the next run.

+

+## Cache

+

+Model weights and files are downloaded from the Hub to a cache, which by default lives in your home directory (`~/.cache/huggingface/hub`). You can change the cache location by setting the `HF_HOME` or `HUGGINGFACE_HUB_CACHE` environment variables, or by configuring the `cache_dir` parameter in methods like [`~DiffusionPipeline.from_pretrained`].
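The lookup order can be sketched as follows. This is an approximation of how `huggingface_hub` resolved the cache directory in the versions we're aware of (newer releases also read `HF_HUB_CACHE`, which takes precedence); treat it as an illustration, not the library's code.

```python
import os


def resolve_hub_cache(env=None):
    """Approximate the Hub cache directory from environment variables."""
    env = os.environ if env is None else env
    # HF_HOME defaults to $XDG_CACHE_HOME/huggingface, i.e. ~/.cache/huggingface.
    xdg_cache = env.get("XDG_CACHE_HOME", os.path.join("~", ".cache"))
    hf_home = os.path.expanduser(env.get("HF_HOME", os.path.join(xdg_cache, "huggingface")))
    # An explicit hub cache variable wins over the HF_HOME-based default.
    default_cache = os.path.join(hf_home, "hub")
    return env.get("HF_HUB_CACHE", env.get("HUGGINGFACE_HUB_CACHE", default_cache))


print(resolve_hub_cache())
```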

+

+Cached files allow you to run 🤗 Diffusers offline. To prevent 🤗 Diffusers from connecting to the internet, set the `HF_HUB_OFFLINE` environment variable to `True` and 🤗 Diffusers will only load files previously downloaded to the cache.

+

+```shell

+export HF_HUB_OFFLINE=True

+```
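If you'd rather set this from Python (for example in a notebook), a sketch is to set the variable before importing 🤗 Diffusers since, as far as we know, the flag is read once when the libraries are imported:

```python
import os

# Set before `import diffusers`; changing it afterwards may have no effect
# because the value is read at import time.
os.environ["HF_HUB_OFFLINE"] = "1"

# from diffusers import DiffusionPipeline
# pipeline = DiffusionPipeline.from_pretrained("runwayml/stable-diffusion-v1-5")  # local cache only
```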

+

+For more details about managing and cleaning the cache, take a look at the [caching](https://huggingface.co/docs/huggingface_hub/guides/manage-cache) guide.

+

+## Telemetry
+
+Our library gathers telemetry information during [`~DiffusionPipeline.from_pretrained`] requests.
+The data gathered includes the version of 🤗 Diffusers and PyTorch/Flax, the requested model or pipeline class,
+and the path to a pretrained checkpoint if it is hosted on the Hugging Face Hub.
+This usage data helps us debug issues and prioritize new features.
+Telemetry is only sent when loading models and pipelines from the Hub,
+and it is not collected if you're loading local files.
+
+We understand that not everyone wants to share additional information, and we respect your privacy.
+You can disable telemetry collection by setting the `DISABLE_TELEMETRY` environment variable from your terminal:
+
+On Linux/MacOS:

+

+```bash

+export DISABLE_TELEMETRY=YES

+```

+

+On Windows:

+

+```bash

+set DISABLE_TELEMETRY=YES

+```
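Both `HF_HUB_OFFLINE` and `DISABLE_TELEMETRY` are boolean flags passed as strings. The sketch below shows how such flags are commonly interpreted; the accepted values (`1`, `ON`, `YES`, `TRUE`, case-insensitive) match what 🤗 Diffusers and `huggingface_hub` accepted in the versions we've looked at, but treat them as an assumption and check your installed version.

```python
import os

# Values treated as "true" for boolean environment flags (assumed, see above).
TRUE_VALUES = {"1", "ON", "YES", "TRUE"}


def env_flag(name, env=None):
    # An unset variable counts as false.
    env = os.environ if env is None else env
    return env.get(name, "").upper() in TRUE_VALUES


print("telemetry disabled:", env_flag("DISABLE_TELEMETRY"))
```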

diff --git a/docs/source/pt/quicktour.md b/docs/source/pt/quicktour.md

new file mode 100644

index 000000000000..1fae0e45e39f

--- /dev/null

+++ b/docs/source/pt/quicktour.md

@@ -0,0 +1,314 @@

+

+

+[[open-in-colab]]

+

+# Quicktour
+
+Diffusion models are trained to denoise random Gaussian noise step by step to generate a sample of interest, such as an image or audio. This has sparked a tremendous amount of interest in generative AI, and you have probably seen examples of diffusion-generated images on the internet. 🧨 Diffusers is a library aimed at making diffusion models widely accessible to everyone.
+
+Whether you're a developer or an everyday user, this quicktour will introduce you to 🧨 Diffusers and help you get up and generating quickly! There are three main components of the library to know about:
+
+- The [`DiffusionPipeline`] is a high-level end-to-end class designed to rapidly generate samples from pretrained diffusion models for inference.
+- Popular pretrained [model](./api/models) architectures and modules that can be used as building blocks for creating diffusion systems.
+- Many different [schedulers](./api/schedulers/overview): algorithms that control how noise is added for training, and how to generate denoised images during inference.
+
+This quicktour will show you how to use the [`DiffusionPipeline`] for inference, and then walk you through how to combine a model and scheduler to replicate what's happening inside the [`DiffusionPipeline`].

+

+

+

+This quicktour is a simplified version of the introductory 🧨 Diffusers [notebook](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/diffusers_intro.ipynb) to help you get started quickly. If you want to learn more about 🧨 Diffusers' goal, design philosophy, and additional details about its core API, check out the notebook!

+

+

+

+Before you begin, make sure you have all the necessary libraries installed:

+

+```py

+# uncomment to install the necessary libraries in Colab

+#!pip install --upgrade diffusers accelerate transformers

+```

+

+- [🤗 Accelerate](https://huggingface.co/docs/accelerate/index) speeds up model loading for inference and training.
+- [🤗 Transformers](https://huggingface.co/docs/transformers/index) is required to run the most popular diffusion models, such as [Stable Diffusion](https://huggingface.co/docs/diffusers/api/pipelines/stable_diffusion/overview).

+

+## DiffusionPipeline

+

+The [`DiffusionPipeline`] is the easiest way to use a pretrained diffusion system for inference. It is an end-to-end system containing the model and the scheduler. You can use the [`DiffusionPipeline`] out of the box for many tasks. Take a look at the table below for some supported tasks, and for a complete list of supported tasks, check out the [🧨 Diffusers Summary](./api/pipelines/overview#diffusers-summary) table.
+
+| **Task** | **Description** | **Pipeline** |
+| -------------------------------------- | ------------------------------------------------------------------------------------------------------------------------- | ---------------------------------------------------------------------------------- |
+| Unconditional Image Generation | generate an image from Gaussian noise | [unconditional_image_generation](./using-diffusers/unconditional_image_generation) |
+| Text-Guided Image Generation | generate an image given a text prompt | [conditional_image_generation](./using-diffusers/conditional_image_generation) |
+| Text-Guided Image-to-Image Translation | adapt an image guided by a text prompt | [img2img](./using-diffusers/img2img) |
+| Text-Guided Image-Inpainting | fill the masked part of an image given the image, the mask, and a text prompt | [inpaint](./using-diffusers/inpaint) |
+| Text-Guided Depth-to-Image Translation | adapt parts of an image guided by a text prompt while preserving structure via depth estimation | [depth2img](./using-diffusers/depth2img) |
+
+Start by creating an instance of a [`DiffusionPipeline`] and specify which pipeline checkpoint you would like to download.
+You can use the [`DiffusionPipeline`] for any [checkpoint](https://huggingface.co/models?library=diffusers&sort=downloads) stored on the Hugging Face Hub.
+In this quicktour, you'll load the [`stable-diffusion-v1-5`](https://huggingface.co/runwayml/stable-diffusion-v1-5) checkpoint for text-to-image generation.

+

+

+

+For [Stable Diffusion](https://huggingface.co/CompVis/stable-diffusion) models, please carefully read the [license](https://huggingface.co/spaces/CompVis/stable-diffusion-license) first before running the model. 🧨 Diffusers implements a [`safety_checker`](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/safety_checker.py) to prevent offensive or harmful content, but the model's improved image generation capabilities can still produce potentially harmful content.

+

+

+

+Load the model with the [`~DiffusionPipeline.from_pretrained`] method:

+

+```python

+>>> from diffusers import DiffusionPipeline

+

+>>> pipeline = DiffusionPipeline.from_pretrained("runwayml/stable-diffusion-v1-5", use_safetensors=True)

+```

+

+The [`DiffusionPipeline`] downloads and caches all modeling, tokenization, and scheduling components. You'll see that the Stable Diffusion pipeline is composed of the [`UNet2DConditionModel`] and [`PNDMScheduler`], among other things:

+

+```py

+>>> pipeline

+StableDiffusionPipeline {

+ "_class_name": "StableDiffusionPipeline",

+ "_diffusers_version": "0.13.1",

+ ...,

+ "scheduler": [

+ "diffusers",

+ "PNDMScheduler"

+ ],

+ ...,

+ "unet": [

+ "diffusers",

+ "UNet2DConditionModel"

+ ],

+ "vae": [

+ "diffusers",

+ "AutoencoderKL"

+ ]

+}

+```
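To inspect the components programmatically instead of reading the printed config, you can iterate over the pipeline's `components` dictionary. A minimal sketch (`component_classes` is an illustrative helper name); optional components, such as a disabled safety checker, may be `None`, so they are skipped:

```python
def component_classes(pipeline):
    # pipeline.components maps component names (e.g. "unet", "scheduler") to objects.
    return {
        name: type(obj).__name__
        for name, obj in pipeline.components.items()
        if obj is not None
    }


# component_classes(pipeline)["scheduler"]  # 'PNDMScheduler' for this checkpoint
```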

+

+We strongly recommend running the pipeline on a GPU because the model consists of roughly 1.4 billion parameters.
+You can move the generator object to a GPU, just like you would in PyTorch:

+

+```python

+>>> pipeline.to("cuda")

+```
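If the same script should also run on machines without a GPU, a common pattern is to pick the device at runtime. A sketch that falls back to the CPU when CUDA (or PyTorch itself) isn't available; expect generation on the CPU to be much slower:

```python
def pick_device() -> str:
    try:
        import torch
    except ImportError:
        return "cpu"  # PyTorch isn't installed, so there is no GPU backend to use
    return "cuda" if torch.cuda.is_available() else "cpu"


device = pick_device()
# pipeline.to(device)
```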

+

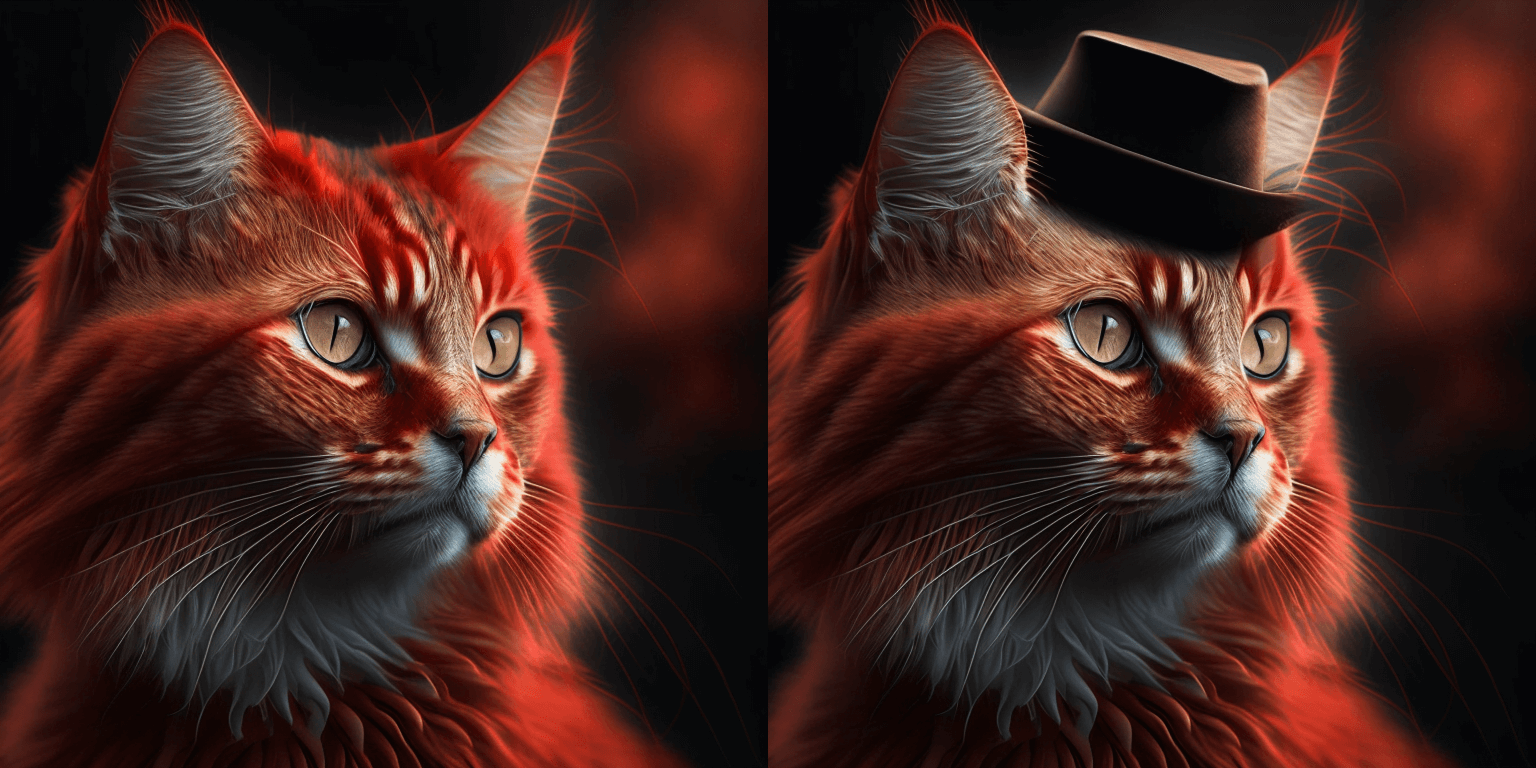

+Now you can pass a text prompt to the `pipeline` to generate an image, and then access the denoised image. By default, the image output is wrapped in a [`PIL.Image`](https://pillow.readthedocs.io/en/stable/reference/Image.html?highlight=image#the-image-class) object.

+

+```python

+>>> image = pipeline("An image of a squirrel in Picasso style").images[0]

+>>> image

+```
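Since the result is a regular PIL image, you can save it with its `save` method. A sketch wrapping generation and saving in one function; `generate_and_save` and the file name are illustrative:

```python
def generate_and_save(pipeline, prompt, path):
    # The pipeline returns an output object whose `images` list holds PIL images.
    image = pipeline(prompt).images[0]
    image.save(path)
    return image


# generate_and_save(pipeline, "An image of a squirrel in Picasso style", "squirrel_picasso.png")
```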

+
